Hi community!

I’m David Katus, and I’m incredibly excited to share that my project has been selected as one of the 10 chosen projects.

I will be building a fully offline, multi-camera, gesture-controlled smart home interface.

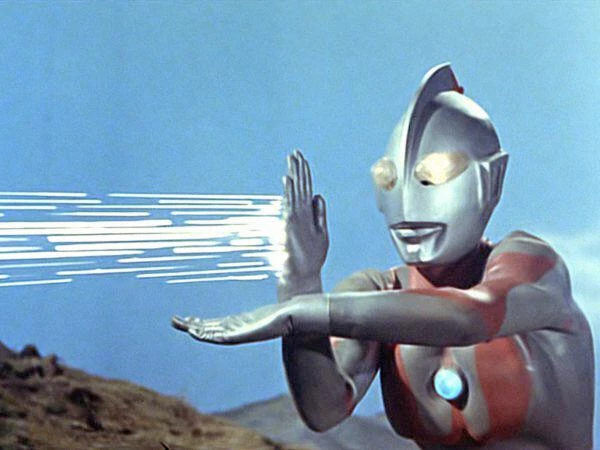

The goal is to control everyday devices (TV, lights, speakers) using only intuitive hand gestures and pointing motions. By combining edge AI gesture recognition with simple device actions, I aim to create an interaction experience that feels natural, seamless, and futuristic.

This project matters to me because I’ve always been fascinated by natural human–machine interfaces. Ever since I first saw Iron Man controlling his workshop with mid-air gestures, I’ve wanted to bring a small part of that experience into real life. With edge AI, this finally becomes practical, while keeping all processing private and completely local.

Most smart devices today rely on remotes, apps, menus, or voice assistants. They work, but they are not always intuitive.

My system aims to improve this through:

• Hands-free control from anywhere in the room

• A unified gesture language across all supported devices

• Object selection by pointing, enabled through multi-camera spatial understanding

• 100% offline processing, ensuring full privacy
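
As a rough illustration of the pointing-based selection idea (a sketch under assumptions, not the project's actual implementation): suppose the multi-camera setup already yields 3D positions for the wrist and fingertip in room coordinates, and that each device's position is known. The device names, coordinates, the `select_device` helper, and the 15-degree tolerance below are all hypothetical. The sketch picks the device whose direction from the hand is closest to the pointing ray.

```python
import math

# Hypothetical device positions in room coordinates (metres); illustrative values only.
DEVICES = {
    "tv": (0.0, 1.0, 3.0),
    "lamp": (2.0, 1.5, 2.0),
    "speaker": (-2.0, 0.5, 2.5),
}


def _sub(a, b):
    """Component-wise difference a - b of two 3D points."""
    return tuple(x - y for x, y in zip(a, b))


def _norm(v):
    """Euclidean length of a 3D vector."""
    return math.sqrt(sum(x * x for x in v))


def angle_between(u, v):
    """Angle in degrees between two 3D vectors (180 if either is zero-length)."""
    nu, nv = _norm(u), _norm(v)
    if nu == 0 or nv == 0:
        return 180.0
    cos_t = sum(a * b for a, b in zip(u, v)) / (nu * nv)
    cos_t = max(-1.0, min(1.0, cos_t))  # guard against rounding just outside [-1, 1]
    return math.degrees(math.acos(cos_t))


def select_device(wrist, fingertip, devices=DEVICES, max_angle_deg=15.0):
    """Return the device best aligned with the wrist-to-fingertip ray, or None.

    Assumes wrist and fingertip are 3D points that were already triangulated
    from several camera views; that step is not shown here.
    """
    ray = _sub(fingertip, wrist)
    best_name, best_angle = None, max_angle_deg
    for name, pos in devices.items():
        angle = angle_between(ray, _sub(pos, wrist))
        if angle <= best_angle:
            best_name, best_angle = name, angle
    return best_name


if __name__ == "__main__":
    # Hand at (0, 1, 0) pointing straight along +z, towards the hypothetical TV.
    print(select_device((0.0, 1.0, 0.0), (0.0, 1.0, 0.3)))
```

The angular tolerance is a design choice: a wider cone makes selection more forgiving but increases the chance of picking the wrong device when two are close together.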

This approach is ideal for living rooms, studios, workshops, or any environment where people interact with multiple devices and want a more natural, hands-free control method.

My first steps will be getting familiar with the Axelera DevKit and testing basic camera capture and inference performance.

I’m thrilled to begin this journey with Project Gaius and will keep you updated on my progress.