Trying new AI hardware typically means weeks of integration work before you can tell if the hardware is even worth it. There is a new compiler, a new runtime, and a new set of preprocessing quirks. By the time you've ported your model and built the surrounding pipeline, you've invested a quarter and still don't know if the accuracy holds up.

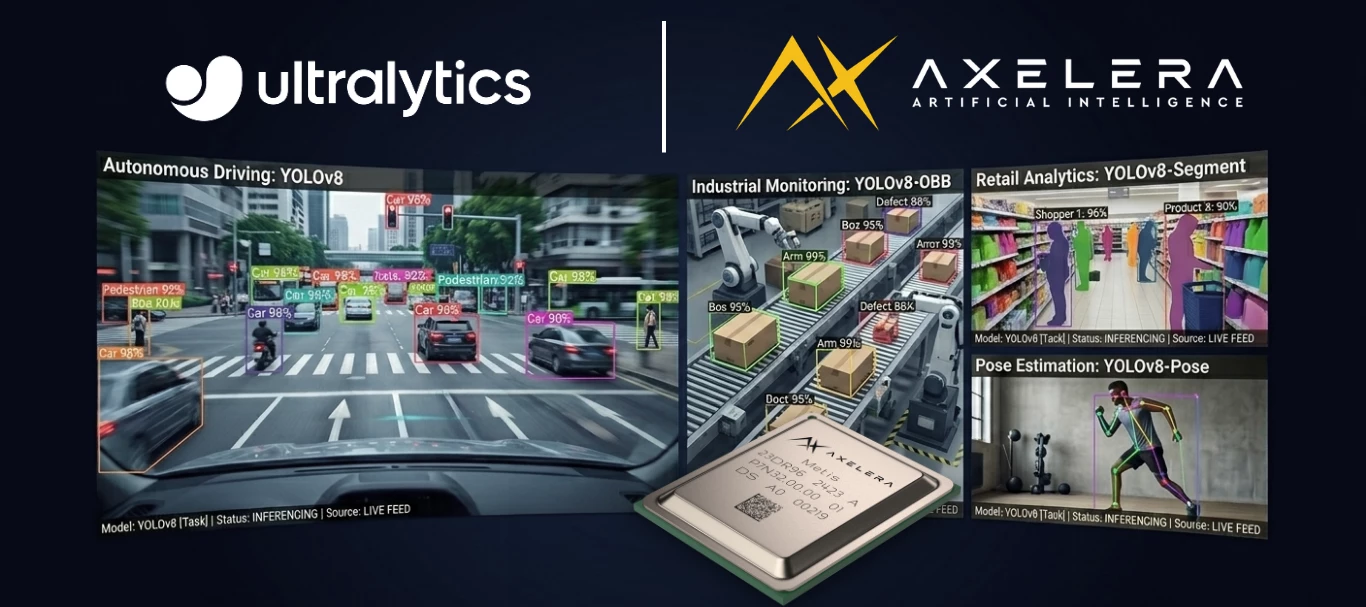

The Ultralytics and Axelera® AI integration removes that barrier. If you train with Ultralytics, you can export to Axelera’s Metis® AIPU, validate accuracy, and run a working application, all without leaving the tools you already know. Once you’re running on Metis with the Voyager® SDK, you can tap into the high performance and low power needed to solve your edge AI inferencing challenges. With four independently programmable cores, you can run models in parallel, easily build an end-to-end pipeline, implement adaptive tiling for high resolution inference, and scale your hardware without reworking your pipeline configuration.

Export: One Command

    from ultralytics import YOLO

    model = YOLO("yolo26n.pt")
    model.export(format="axelera")

That's it. The export compiles and quantizes your model to int8, producing an .axm file optimized for the Metis AIPU. No retraining. No architecture changes. No hardware expertise required to get started.

The integration supports detection, pose estimation, instance segmentation, oriented bounding box (OBB), and image classification across Ultralytics YOLOv8, Ultralytics YOLO11, and Ultralytics YOLO26 models. If you've trained it with Ultralytics, it exports.
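To make the int8 step less abstract, here is a minimal sketch of symmetric per-tensor quantization in plain Python. It is a generic textbook scheme, not a description of what the Axelera compiler actually does, and the function names and sample weights are made up for illustration.

```python
def quantize_int8(values):
    """Symmetric per-tensor quantization of a list of floats to int8 codes."""
    max_abs = max(abs(v) for v in values)
    # Avoid division by zero for an all-zero tensor.
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    # Round to the nearest code and clamp to the symmetric int8 range.
    codes = [max(-127, min(127, round(v / scale))) for v in values]
    return codes, scale


def dequantize(codes, scale):
    """Map int8 codes back to approximate float values."""
    return [c * scale for c in codes]


# Illustrative weights only; not taken from any real model.
weights = [0.5, -1.27, 0.003, 1.0]
codes, scale = quantize_int8(weights)
restored = dequantize(codes, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))
print(codes, scale, max_error)
```

The round-trip error of each value is bounded by half the scale, which is why small weights next to large ones lose the most relative precision. That is the effect you are checking for when you validate the exported model.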

Validate: Know Before You Commit

Accuracy is the first question every machine learning (ML) engineer asks when evaluating new hardware, especially hardware that quantizes to int8. Historically, answering that question has been painful. Most vendors publish model zoo benchmarks, but those benchmarks use the vendor's own validation pipeline, which may not match your training framework's baseline, so the comparison isn't apples-to-apples. And it's not your model; it's a reference model with reference weights. You still don't know if your specific model retains accuracy on that hardware.

The Ultralytics integration changes this. You validate with the same yolo val you already trust:

    yolo val model=yolo26n_axelera_model data=coco.yaml
    yolo predict model=yolo26n_axelera_model source=test_image.jpg

Two checks, both using tools you already know:

- Quantitative: yolo val runs your validation or test set and gives you mAP numbers for the Metis-compiled int8 model, measured the same way as the baseline you trained with, so the two are directly comparable. No separate tooling or proxy benchmarks are involved.

- Qualitative: yolo predict runs inference on your own test images so you can visually inspect the results. Does it look right on your data?

Metis is designed for high-accuracy int8 inference. The hardware and compiler use mixed-precision techniques under the hood to preserve accuracy where it matters most, while keeping int8 throughput. You can verify the results yourself in minutes rather than taking our word for it.

The takeaway: you can evaluate the Metis AIPU for your use case in an afternoon instead of a quarter. If the accuracy numbers work, keep going. If they don't, you've lost hours, not months, and you can focus on optimizing for your use case.
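One way to turn the two yolo val results into a go/no-go decision is a simple retention check. The sketch below is only an illustration: the function names, the 98% threshold, and the sample numbers are all assumptions, so pick a threshold that matches your own application.

```python
def accuracy_retention(baseline_map, quantized_map):
    """Fraction of the baseline mAP that the quantized model retains."""
    if baseline_map <= 0:
        raise ValueError("baseline mAP must be positive")
    return quantized_map / baseline_map


def passes_gate(baseline_map, quantized_map, min_retention=0.98):
    """True when the int8 model keeps at least min_retention of baseline mAP."""
    return accuracy_retention(baseline_map, quantized_map) >= min_retention


# Placeholder numbers for illustration only, not measured results.
# Substitute the mAP values printed by your own two `yolo val` runs.
baseline, quantized = 0.500, 0.495
print(f"retention: {accuracy_retention(baseline, quantized):.1%}")
print("keep going" if passes_gate(baseline, quantized) else "investigate")
```

Because both numbers come from the same validation command and dataset, the ratio reflects the effect of compilation and quantization rather than differences between evaluation pipelines.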

Build: From Export to Application

You have a compiled model. Now how much code does it take to build a real application around it?

This is one of the hello-world examples that ship with Ultralytics. It includes pose estimation with multi-object tracking, and it’s ready to run:

    from axelera.runtime import op

    # ConfidenceFilter (a custom operator), video, and draw_skeleton
    # are not shown here.
    pipeline = op.seq(
        op.colorconvert("RGB", src="BGR"),
        op.letterbox(640, 640),
        op.totensor(),
        op.load("yolo26n-pose.axm"),
        ConfidenceFilter(threshold=0.25),
        op.to_image_space(keypoint_cols=range(6, 57, 3)),
        op.tracker(algo="tracktrack"),
    ).optimized()

    for frame in video:
        tracked_poses = pipeline(frame)
        for pose in tracked_poses:
            draw_skeleton(frame, pose)

That's the entire pipeline: preprocessing, inference, postprocessing, and tracking in about 15 lines of Python. You don't need to be a deployment expert. Voyager SDK's Pythonic pipeline builder handles the low-level orchestration, so all you need to do is describe what happens at each stage.
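If you are curious what the letterbox and to_image_space stages in that pipeline have to account for, the geometry can be sketched in a few lines. These are standalone functions written for illustration. They borrow the operator names but are not the SDK's implementation, and centered padding is an assumption.

```python
def letterbox_params(src_w, src_h, dst_w=640, dst_h=640):
    """Scale factor and padding that fit src into dst, keeping aspect ratio."""
    scale = min(dst_w / src_w, dst_h / src_h)
    new_w, new_h = round(src_w * scale), round(src_h * scale)
    # Assumes the resized image is centered, with equal padding on both sides.
    pad_x = (dst_w - new_w) / 2
    pad_y = (dst_h - new_h) / 2
    return scale, pad_x, pad_y


def to_image_space(x, y, scale, pad_x, pad_y):
    """Map a point from letterboxed model coordinates back to the source frame."""
    return (x - pad_x) / scale, (y - pad_y) / scale


# A 1920x1080 frame squeezed into a 640x640 model input.
scale, pad_x, pad_y = letterbox_params(1920, 1080)
print(scale, pad_x, pad_y)
print(to_image_space(320, 320, scale, pad_x, pad_y))
```

Every box corner and keypoint the model predicts lives in the padded 640x640 space, so it has to go through the inverse mapping before you can draw it on the original frame. As a side note, range(6, 57, 3) yields 17 column indices, which matches the 17 keypoints of a COCO-style pose model; the exact column layout is an assumption here.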

For production, the pipeline builder runtime (axelera-rt) is lightweight. It doesn’t require PyTorch, CUDA, or a heavy ML training stack. It contains just the runtime your application needs for edge AI. When you move from evaluation to deployment, you drop the training dependencies entirely.

Voyager SDK includes both a YAML-based pipeline builder for production deployments and this newer Python API. The Python path is the natural fit for ML engineers coming from Ultralytics because it reads like the model pipeline you would sketch on a whiteboard.

Each operator does one thing:

- op.seq chains them

- .optimized() fuses adjacent operations for speed

- the tracker adds persistent identity across frames (switch algorithms by changing a string)

- ConfidenceFilter is a custom operator (you subclass op.Operator and then write a __call__ method)

The pipeline isn't a black box, so you can insert your own logic anywhere in the chain.
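To show the shape of a custom operator, here is a sketch that runs without the SDK installed. The Operator base class and the seq helper below are stand-ins written for this example. The real op.Operator and op.seq may expect a different interface, and the row layout with confidence in column 4 is an assumption.

```python
class Operator:
    """Stand-in base class; the real one is op.Operator in axelera.runtime."""

    def __call__(self, data):
        raise NotImplementedError


class ConfidenceFilter(Operator):
    """Drop detections whose confidence falls below a threshold."""

    def __init__(self, threshold=0.25, conf_col=4):
        self.threshold = threshold
        self.conf_col = conf_col  # assumed position of the confidence score

    def __call__(self, detections):
        return [row for row in detections if row[self.conf_col] >= self.threshold]


def seq(*stages):
    """Minimal stand-in for op.seq: feed each stage's output to the next."""
    def run(data):
        for stage in stages:
            data = stage(data)
        return data
    return run


# Rows use an assumed layout: x1, y1, x2, y2, confidence, class_id.
detections = [
    [10, 10, 50, 80, 0.91, 0],
    [12, 14, 48, 77, 0.12, 0],
    [200, 40, 260, 160, 0.40, 0],
]
pipeline = seq(ConfidenceFilter(threshold=0.25))
kept = pipeline(detections)
print(len(kept))
```

Because each stage is just a callable that takes the previous stage's output, adding your own logic means writing one small class and placing it at the right position in the chain.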

What Production Looks Like at Scale

The same pipeline patterns you just saw scale far beyond a single camera.

At ISC West 2026, we ran a live demo processing two 8K camera feeds and one 4K feed simultaneously on three Metis 4-chip PCIe cards. The system ran 48 parallel model instances covering person detection, pose estimation, face recognition, weapon detection, and PPE identification, all in real time.
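The figure of 48 lines up with the hardware count if you assume one model instance per core. That mapping is an assumption made here for the arithmetic, not something the demo description states.

```python
# Hardware counts from the demo description and the Metis core count.
cards = 3
chips_per_card = 4
cores_per_chip = 4

# Assumption: each core hosts exactly one model instance.
total_cores = cards * chips_per_card * cores_per_chip
print(total_cores)
```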

This is a developer blueprint for what production edge AI looks like at scale. It runs on the same Voyager SDK and Metis AIPUs available today. The hardware scales from a single-chip M.2 module to a four-chip PCIe card, but your pipeline code stays the same. When Axelera's next-generation Europa AIPU ships, it runs on the same SDK.

Read the full technical breakdown in the ISC West 2026 blog post.

Why Metis Is Built for This

Running demanding workloads at the edge requires hardware engineered for exactly that. Metis is built on Digital In-Memory Compute (D-IMC) architecture, which brings computation directly to where the data lives rather than moving data to a separate compute unit. The result is more performance per watt, which matters when you are deploying to edge environments where power budgets are real constraints. Four independently programmable cores give you the flexibility to run four different models in parallel, or to mix and match single-model and cascaded configurations depending on what your application needs.

Voyager SDK is designed to meet you where you are: a clean Python API and YAML pipeline builder for straightforward deployments, and lower-level access for developers who want to go deeper. Features like adaptive tiling let you run accurate inference across multiple 8K video streams without retraining your models. The same SDK that runs on a compact M.2 module scales to enterprise and edge server deployments without changing your pipeline code.
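To give a feel for why tiling matters at 8K, the sketch below splits a frame into overlapping fixed-size tiles. It is a plain fixed-grid scheme, not Axelera's adaptive tiling algorithm, and the tile size, overlap, and function names are assumptions chosen for illustration.

```python
def tile_origins(length, tile, overlap):
    """Start offsets of tiles of size `tile` covering [0, length) with overlap."""
    if not 0 <= overlap < tile:
        raise ValueError("overlap must be non-negative and smaller than tile")
    if tile >= length:
        return [0]
    stride = tile - overlap
    origins = list(range(0, length - tile, stride))
    origins.append(length - tile)  # last tile sits flush with the far edge
    return origins


def tile_grid(width, height, tile=640, overlap=64):
    """(x, y, w, h) rectangles for every tile in the frame, row by row."""
    return [
        (x, y, min(tile, width), min(tile, height))
        for y in tile_origins(height, tile, overlap)
        for x in tile_origins(width, tile, overlap)
    ]


tiles = tile_grid(7680, 4320)  # 8K UHD frame
print(len(tiles))
```

Each tile is the size the model was trained on, so small objects keep their native resolution instead of being shrunk away by a single downscale of the whole frame. The overlap is there so that objects straddling a tile boundary appear whole in at least one tile.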

One platform. Infinite possibilities.

Get Started

- Follow the Ultralytics Axelera integration guide for setup (firmware, SDK installation, export, and validation)

- Run the hello-world examples (pose tracking and instance segmentation, ready out of the box)

- Explore the Voyager SDK on GitHub for the full model zoo, pipeline examples, and documentation

Tell us what you're building. We'd love to hear in the comments.