BLOG

Keep up to date with the latest news, information and updates from the Axelera team


News | Apr 14, 2026

Voyager SDK: New Pipeline Builder and More

The latest version of the Voyager® Software Development Kit (SDK) is here, and this release touches nearly every layer of the stack. Whether you're deploying on new hardware, building custom inference pipelines, or just tired of wrestling with installation scripts, there's something in this release for you.

Release Highlights for version 1.6

Top 3 highlights:

- Support for the new Axelera Metis® M.2 Max
- Install Voyager SDK with a single line of code
- Build complete inference pipelines in pure Python

Also new in this release:

- New models added to the model zoo
- Track multiple objects with TrackTrack, including subjects that exit and re-enter the scene
- Optimize multi-camera pipelines for high-throughput, high-resolution inference
- Deploy Metis across more devices, OSes, and virtual environments
- Streamline your development workflow with improved tools

Metis M.2 Max: unprecedented performance in an M.2 form factor

The Metis M.2 Max builds on the Metis M.2 module with double the memory bandwidth, a lower profile, and advanced thermal management features for more demanding, but still compact, edge inference. Voyager 1.6 supports the new module and takes full advantage of its capabilities to deliver the inference performance of a PCIe card in the compact M.2 form factor. This opens up deployment in space- and power-constrained edge devices such as retail kiosks, industrial gateways, and embedded vision systems without compromising performance.

The increased memory bandwidth makes the Metis M.2 Max particularly suited to running Large Language Models (LLMs) on edge devices. In addition, the firmware implements closed-loop power control, letting you trade peak compute for a predictable power envelope. Set your limit with `axdevice --set-power-limit` and the hardware stays within your thermal and electrical budget.
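To make the idea of closed-loop power control concrete, here is a toy simulation in Python. It is not how the Metis firmware works: the frequency steps, the linear power model, and the one-step-per-tick rule are all hypothetical assumptions chosen for illustration. What it shows is the feedback principle: measure power every tick, step the clock down when over the limit, and step back up only when there is clear headroom.

```python
# Toy closed-loop power limiter. A conceptual sketch only: the DVFS steps,
# the power model and the control rule are hypothetical, not Metis firmware.

FREQ_STEPS_MHZ = [400, 500, 600, 700, 800]  # hypothetical operating points


def modeled_power_w(freq_mhz: int, load: float) -> float:
    """Hypothetical power model: a static floor plus a load-scaled dynamic term."""
    static_w = 1.5
    return static_w + load * 0.01 * freq_mhz


def run_power_limiter(limit_w: float, loads: list[float], headroom_w: float = 1.0) -> list[tuple[int, float]]:
    """Run one control tick per load sample; return the (MHz, W) measured each tick."""
    idx = len(FREQ_STEPS_MHZ) - 1  # start at peak frequency
    history = []
    for load in loads:
        freq = FREQ_STEPS_MHZ[idx]
        power = modeled_power_w(freq, load)  # stands in for a power sensor reading
        history.append((freq, power))
        if power > limit_w and idx > 0:
            idx -= 1  # over budget: back off one step
        elif power < limit_w - headroom_w and idx < len(FREQ_STEPS_MHZ) - 1:
            idx += 1  # clear headroom: reclaim one step of performance
    return history


if __name__ == "__main__":
    for freq, power in run_power_limiter(limit_w=8.0, loads=[1.0] * 5):
        print(f"{freq} MHz -> {power:.1f} W")
```

With a full load and an 8 W limit, the toy controller backs off from 800 MHz to 600 MHz over two ticks and then holds at 7.5 W; with half the load it stays at peak frequency. A real controller would also have to handle sensor noise and thermal lag, which this model ignores.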
When AI cores go idle, their frequency drops automatically, saving roughly 0.6 W across all four cores with zero impact on active workloads.

Install with pip, Run Anywhere

This is a big one for developer experience. The SDK is now delivered as standalone Python wheels, installable via pip on Python 3.10 through 3.13. There are two wheels: `axelera-rt` for the runtime environment and `axelera-devkit` for the compilation/development environment.

Because the wheels are manylinux-compliant, they work across multiple Linux distributions, including Debian 12 and 13, RHEL 9 and 10, and Yocto-based distributions, without the Axelera installer script. If you've been waiting to integrate Axelera hardware into your existing CI/CD or container workflows, this is your moment!

Pipeline Builder API: a Pythonic way to build inference pipelines

The new Pipeline Builder API (currently in alpha) lets you define entire inference pipelines, from model loading through post-processing and tracking, as composable Python expressions, with no YAML and no extra boilerplate. You can chain operators with `op.seq()`, run branches in parallel with `op.par()`, and apply per-object processing with `op.foreach()`.

Example: Load a model, run inference, display detections, all in the same Python programming unit

The API ships with 30+ operators spanning pre-processing, inference, post-processing, filtering, and tracking. Results come back as typed objects (`DetectedObject`, `PoseObject`, `SegmentedObject`, `TrackedObject`) with built-in `.draw()` visualization. A pipeline optimizer automatically fuses operator chains into SIMD-accelerated kernels where possible. The entire tensor layer is DLPack-compatible, so data moves zero-copy between the pipeline and PyTorch, JAX, or NumPy without leaving device memory.
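The composition model behind `op.seq()`, `op.par()`, and `op.foreach()` can be illustrated with plain Python functions. The sketch below uses hypothetical stand-ins named `seq`, `par`, and `foreach`; they are not the `axelera.runtime` implementation, and their signatures are assumptions. Note that this `par` evaluates its branches one after another, whereas a real runtime may execute them concurrently.

```python
# Minimal stand-ins for sequential, parallel and per-item composition.
# Hypothetical illustration only; not the axelera.runtime operators.
from typing import Any, Callable, Iterable

Op = Callable[[Any], Any]


def seq(*ops: Op) -> Op:
    """Chain operators: each operator's output becomes the next one's input."""
    def run(x: Any) -> Any:
        for op in ops:
            x = op(x)
        return x
    return run


def par(*ops: Op) -> Op:
    """Feed the same input to independent branches and collect their outputs."""
    def run(x: Any) -> tuple:
        return tuple(op(x) for op in ops)
    return run


def foreach(op: Op) -> Op:
    """Apply an operator to every item, e.g. once per detected object."""
    def run(items: Iterable[Any]) -> list:
        return [op(item) for item in items]
    return run


def keep_confident(dets: list[tuple[str, float]]) -> list[tuple[str, float]]:
    """Hypothetical filter over (label, confidence) pairs."""
    return [d for d in dets if d[1] >= 0.5]


pipeline = seq(keep_confident, par(len, foreach(lambda d: d[0].upper())))
print(pipeline([("car", 0.9), ("bus", 0.3), ("truck", 0.7)]))  # (2, ['CAR', 'TRUCK'])
```

Because every combinator returns a plain callable, pipelines nest freely: a `par` can sit inside a `seq`, and a `foreach` can wrap a whole sub-pipeline.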
You can also package pipelines and export them to the new portable .axe format for easy redistribution.

Expanded Model Zoo

Version 1.6 adds significant coverage across vision tasks:

- Object Detection: GELAN-S/M/C (the Ultralytics YOLOv9 backbone), Ultralytics YOLO26-X, and the Ultralytics YOLO-NAS S/M/L family from Deci AI with quantization-aware blocks
- Instance Segmentation: Ultralytics YOLO26-N through Ultralytics YOLO26-X Seg variants
- Pose Estimation: Ultralytics YOLO26-N through Ultralytics YOLO26-X Pose
- Oriented Bounding Boxes: Ultralytics YOLO26 OBB variants for rotated object detection
- Re-Identification: SBS-S50 backbone enabling full Deep-OC-SORT re-identification tracking on Axelera hardware

All new models ship with ready-to-use YAML pipeline files and pre-compiled downloads via `axdownloadmodel`.

TrackTrack and Advanced Multi-Object Tracking

The tracking stack gets a major upgrade with TrackTrack, a state-of-the-art multi-object tracking algorithm from CVPR 2025 that uses iterative matching with track-aware NMS. It's implemented in C++ with Python bindings and available through both the YAML and Pipeline Builder APIs.

Alongside TrackTrack, this release adds Camera Motion Compensation (CMC) for (Deep-)OC-SORT, which is critical for moving-camera deployments, and an experimental Memory Bank feature that lets the tracker restore a person's ID after they leave and re-enter the scene.

Multi-Stream Tiling and OpenCL Acceleration

For multi-camera deployments, tiling pipelines now support multiple camera sources simultaneously, with per-stream tiling configurations via the `tile[...]:source` syntax.
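For readers new to tiling, the core geometry is simple: cover a large frame with fixed-size tiles that overlap, so an object cut by one tile boundary appears whole in a neighboring tile. Here is a minimal Python sketch, assuming square 640-pixel tiles and a 64-pixel overlap (both hypothetical defaults); it illustrates the general technique, not the SDK's `tile[...]` configuration or its internal tiling algorithm.

```python
# Overlapping tile grid for a high-resolution frame (illustrative sketch).
# Tile size and overlap are hypothetical defaults, not SDK settings.


def tile_origins(length: int, tile: int, overlap: int) -> list[int]:
    """Start offsets along one axis; neighbors share at least `overlap` pixels."""
    if overlap >= tile:
        raise ValueError("overlap must be smaller than the tile size")
    if tile >= length:
        return [0]  # a single tile covers the whole axis
    stride = tile - overlap
    starts = list(range(0, length - tile, stride))
    starts.append(length - tile)  # last tile sits flush with the far edge
    return starts


def tile_grid(width: int, height: int, tile: int = 640, overlap: int = 64) -> list[tuple[int, int, int, int]]:
    """Return (x0, y0, x1, y1) boxes that together cover the whole frame."""
    return [
        (x, y, min(x + tile, width), min(y + tile, height))
        for y in tile_origins(height, tile, overlap)
        for x in tile_origins(width, tile, overlap)
    ]


boxes = tile_grid(7680, 4320)  # one 8K frame
print(len(boxes))  # 112 tiles: 14 columns x 8 rows
```

The last tile on each axis is shifted back to sit flush with the frame edge, so coverage is complete without padding; the trade-off is a somewhat larger overlap for that final tile.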
New tools (`tile_config.py`, `camera_scan.py`) automate pipeline generation and camera setup.

On the performance side, face alignment, color conversion, polar transforms, and region-of-interest cropping are now OpenCL-accelerated, and DMA buffer passthrough on Arm eliminates memory copies. This is particularly valuable on the Metis Compute Board, where camera and display share DMA buffers.

Broader Platform and Virtualization Support

Beyond the new operating system support already mentioned, version 1.6 adds:

- Yocto integration via meta-axelera and build sources for the Metis Compute Board
- KVM PCIe passthrough (currently in beta) to pass Metis devices into VMs, with the full runtime stack running inside the guest
- New validated hardware platforms, including the Dell Pro Slim Plus XE5 and ASRock NUC Box-125
- PyTorch 2.7–2.10 support in the compilation environment

Developer Tooling Improvements

As we continue to improve the developer experience, this release includes the following tooling updates:

- TOML compiler configs replace JSON as the default because they are easier to read and edit (JSON still works if you prefer it)
- `axcompile` is the new CLI entry point, replacing `python -m axelera.compiler.compile`
- `axdownloadmedia` fetches test videos and images from cloud storage for benchmarking and experimentation
- `axdevice driver --install` automates PCIe driver setup on Debian systems
- `axmonitor` now shows DDR bandwidth plots and extended power measurements, making it easier to identify whether workloads are memory-bound or compute-bound
- The PCIe Linux driver source is publicly available under a GPLv2 license, so you can build the kernel module yourself

Try it out

Voyager SDK v1.6 is available now:

```bash
# Installation (on Linux)
git clone https://github.com/axelera-ai-hub/voyager-sdk.git
cd voyager-sdk
./install.sh --all --YES --media

# Run a Computer Vision application using YOLOv8
axdownloadmodel yolov8l-coco
./inference.py yolov8l-coco media/traffic1_1080p.mp4

# Run an LLM chatbot using Llama 3.2
axllm llama-3-2-1b-1024-4core-static --prompt "Tell me a joke"
```

For the full release notes, documentation, and technical support, visit the Axelera AI Customer Portal.

We'd love to hear what you build with it! Comment below or share more about your project in Axelera’s Community.

Related products: AI Software

Tutorial | Apr 14, 2026

The Voyager Pipeline Builder API: Inference Pipelines as Python Expressions

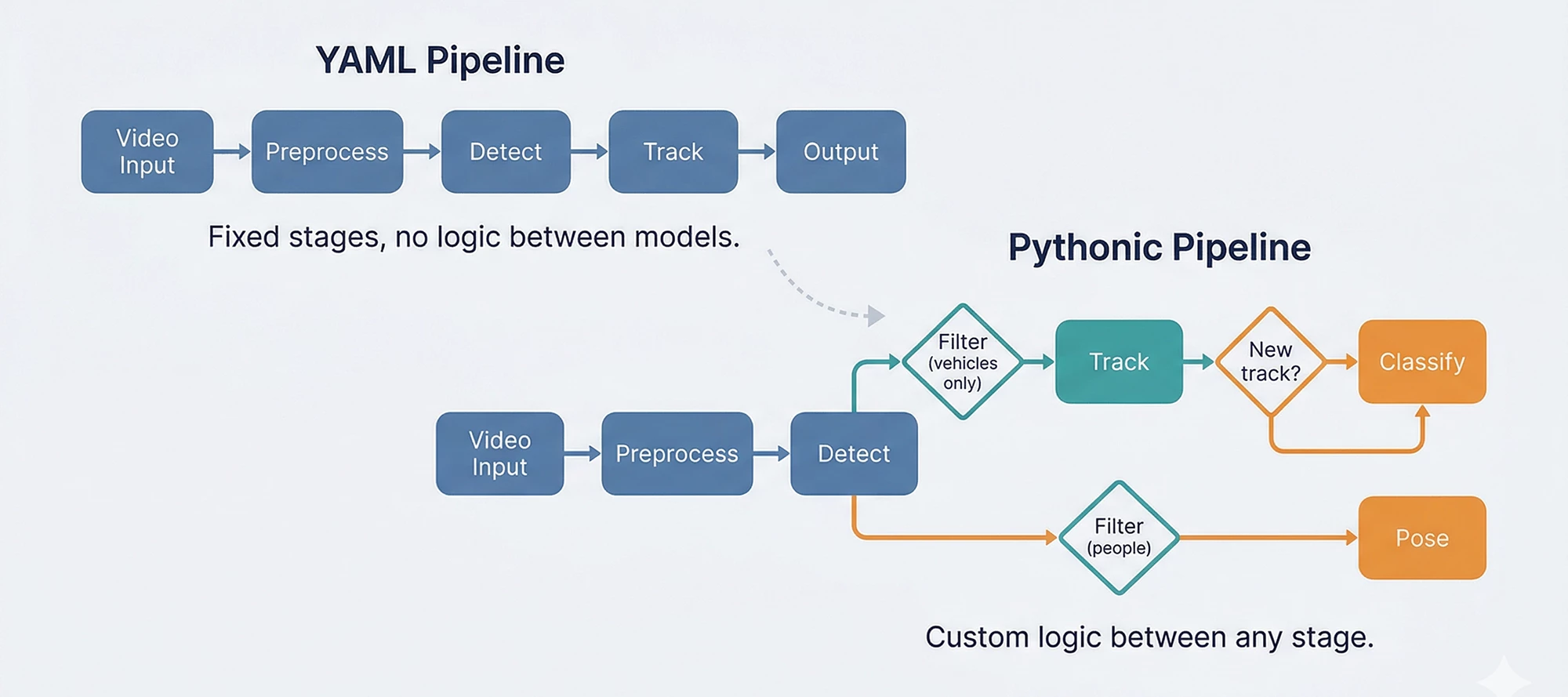

We made a deliberate bet when we built the Voyager® Software Development Kit (SDK) pipeline builder: pipelines described in YAML, not code. A single YAML file would define everything from video input to model inference to postprocessing. The SDK would handle GStreamer orchestration, multi-stream management, image preprocessing (such as camera distortion correction and color conversion), and hardware dispatch. Application engineers could deploy detection and tracking pipelines without writing any inference code.

That bet paid off. Production security systems, traffic analytics, and retail deployments run on YAML pipelines today. A detection-with-tracking pipeline looks like this:

```yaml
pipeline:
  - detections:
      model_name: yolo26s
      preprocess:
        - letterbox:
            width: 640
            height: 640
        - torch-totensor:
      postprocess:
        - decodeyolo10:
            conf_threshold: 0.4
  - tracker:
      model_name: oc_sort
      cv_process:
        - tracker:
            algorithm: oc-sort
            bbox_task_name: detections
```

And the application code to consume it:

```python
stream = create_inference_stream(network="yolo26s-coco-tracker", sources=["camera.mp4"])
for frame_result in stream:
    for obj in frame_result.tracker:
        print(f"{obj.label.name} {obj.track_id}")
```

The approach is compact, declarative, and fast. For standard detect-and-track workflows, it delivered two of the three things edge AI developers need: performance and ease of use. The third, flexibility, is where the story gets interesting.

Then users started building things we didn't plan for

A customer needed to detect vehicles, track them, and run a secondary classifier only on newly appeared tracks entering a specific zone. Another wanted to split detections by class, run different models on each subset, and merge the results with custom business logic.
A third wanted to prototype cascade pipelines in a Jupyter notebook before deploying to production.

The YAML pipeline can support all of these, and we have customers in production using such capabilities, but each new inter-stage pattern requires C++ and Python development to enable it. YAML cascades work through predefined reference patterns (`source: roi`, `where: task_name`) that connect stages declaratively. Adding custom logic between stages, such as "only classify if the track is new" or "skip this model if the confidence is below X and the object is in zone B", means building new C++ components and Python wrappers for each specific case. Voyager’s foundational runtime objects (`InferenceStream` and `AxInferenceNet`) are extensible enough to support inter-stage logic, but the development cost scales with every new pattern.

There was also a subtler friction: ML engineers prototype in PyTorch and NumPy. They think in tensors and function calls, not YAML keys. Asking them to translate a working Python prototype into YAML configuration added a step that slowed iteration without adding value.

The YAML abstraction was right for deployment. It wasn't fast enough for development iteration.

In YAML, custom logic between stages requires C++ and Python development. The Pythonic builder puts it in your hands.

What if the pipeline IS the code?

That question led to the Pythonic Pipeline Builder, an experimental API where pipelines are composed in Python, not described in YAML. A similar detection pipeline, in code:

```python
from axelera.runtime import op

pipeline = op.seq(
    op.letterbox(640, 640),
    op.totensor(),
    op.load('yolov8n-coco.axm'),
    op.decode_detections(algo='yolov8', num_classes=80),
    op.nms(),
    op.to_image_space(),
    op.axdetection(class_id_type=op.CocoClasses),
)
detections = pipeline(image)
```

Each operator does one thing. `op.seq` chains them. The pipeline is a callable.
This isn't a wrapper around the YAML system; it's a separate runtime that gives direct access to the same optimized C/C++ operators, with the flexibility to compose them however the use case requires.

But the real point isn't the detection pipeline. That works fine in YAML too. The point is what happens when you need to go beyond it.

The moment it pays off

Here's the vehicle-tracking scenario in Python: detect vehicles, filter by class, track them, and classify only newly appeared tracks. This is the kind of inter-stage logic that would normally require dedicated C++ development:

```python
from axelera.runtime import op

detect = op.seq(
    op.colorconvert('BGR', 'RGB'),
    op.letterbox(640, 640),
    op.totensor(),
    op.load('yolov8n-coco.axm'),
    op.decode_detections(algo='yolov8', num_classes=80),
    op.nms(),
    op.to_image_space(),
    op.axdetection(class_id_type=op.CocoClasses),
    op.filter(class_ids=[op.CocoClasses.car, op.CocoClasses.truck, op.CocoClasses.bus]),
)

tracker = op.tracker(algo='bytetrack', return_all_states=True)

classify = op.seq(
    op.croproi(property='bbox'),
    op.resize(size=256, half_pixel_centers=True),
    op.centercrop((224, 224)),
    op.totensor(),
    op.normalize(mean=[0.485, 0.456, 0.406], std=[0.229, 0.224, 0.225]),
    op.load('vehicle-type-classifier.axm'),
    op.axclassification(),
    op.topk(k=1),
)

for frame in video:
    with op.frame_context(frame):
        detections = detect(frame)
        tracked = tracker(detections)
        for obj in tracked:
            if obj.state.name == 'new':
                label = classify(frame, obj.tracked)
                print(f"New vehicle {obj.track_id}: {label}")
```

The filter, tracker, and classifier are separate pieces that compose freely. The "only classify new tracks" logic is a Python `if` statement, not a C++ component, callback library, or feature request.
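The original scenario also asked for classification only when a new track enters a specific zone. Here is one way such a rule might look as ordinary Python, sketched with hypothetical stand-ins (`Track`, `point_in_polygon`, `should_classify`) rather than SDK types; in a real pipeline the state and bounding box would come from the tracker's output objects, and the state strings used here are assumptions. The zone test is the standard ray-casting point-in-polygon algorithm.

```python
# Gate a secondary model on track state and zone membership.
# Track, point_in_polygon and should_classify are hypothetical stand-ins,
# not Voyager SDK types; state names are assumptions for illustration.
from dataclasses import dataclass


@dataclass
class Track:
    track_id: int
    state: str                                # e.g. "new", "tracked", "lost"
    bbox: tuple[float, float, float, float]   # (x0, y0, x1, y1) in pixels


def point_in_polygon(x: float, y: float, poly: list[tuple[float, float]]) -> bool:
    """Ray casting: a point is inside if a horizontal ray crosses an odd number of edges."""
    inside = False
    n = len(poly)
    for i in range(n):
        x1, y1 = poly[i]
        x2, y2 = poly[(i + 1) % n]
        if (y1 > y) != (y2 > y):  # this edge straddles the ray's height
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside
    return inside


def should_classify(track: Track, zone: list[tuple[float, float]]) -> bool:
    """Business rule: run the classifier only for new tracks centered in the zone."""
    x0, y0, x1, y1 = track.bbox
    cx, cy = (x0 + x1) / 2, (y0 + y1) / 2
    return track.state == "new" and point_in_polygon(cx, cy, zone)


zone = [(0, 0), (100, 0), (100, 100), (0, 100)]
tracks = [
    Track(1, "new", (10, 10, 30, 30)),        # new and inside the zone
    Track(2, "tracked", (10, 10, 30, 30)),    # inside, but already seen
    Track(3, "new", (150, 150, 170, 170)),    # new, but outside the zone
]
print([t.track_id for t in tracks if should_classify(t, zone)])  # [1]
```

Keeping the rule in one small function also makes it easy to unit-test without any hardware attached.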
When the business rule changes (classify lost tracks too, skip trucks, or add a second classifier for color), the change is a line of Python, not a development cycle.

You don't have to choose one

During this experimental period, the most practical path for many teams is a hybrid: keep YAML for what it already does well, and hand off to Python where flexibility matters.

Concretely: a YAML pipeline defines the top-level models (detection, pose, segmentation) without cascading. `InferenceStream` handles video acquisition, image preprocessing, multi-stream management, and primary inference at full GStreamer-optimized throughput. Then, in your application code, Pythonic operators take the detection results and run tracking, filtering, secondary models, and business logic, all in Python.

This isn't a migration. It's a bridge. Teams keep their existing YAML pipelines and add Python where the cost of enabling a new pattern in YAML outweighs writing it directly. As the Pythonic builder matures, more of the pipeline can shift over incrementally.

What's ready and what's not

The experimental version of the Pythonic Pipeline Builder ships with Voyager SDK 1.6. We want to be clear about where it stands.

What works today: the operator API (`op.seq`, `op.filter`, `op.tracker`, `op.foreach`, custom operators), detection/classification/pose/segmentation pipelines, and four tracking algorithms. Models compile through the Ultralytics integration or through the compiler API for any ONNX/PyTorch model. Getting-started and pipeline overview docs ship with the SDK.

What's not ready yet: the optimized fused kernels that give YAML pipelines their peak throughput haven't been ported to the Pythonic builder yet; each release will close this gap. The YAML and Pythonic paths also use different model compilation workflows today. The next beta will unify these so that a model compiled once works with both.
A new video orchestration system is also in development to replace the GStreamer dependency with something more flexible.

The goal has always been all three: performance, ease of use, and flexibility. The YAML builder delivered the first two. The Pythonic builder is how we add the third, without giving up what already works.

Which way do you lean?

This direction is shaped by how developers actually use the SDK. Do you prefer YAML for its simplicity? Is the Pythonic API closer to how you think about pipelines? Would a hybrid fit your workflow best?

Let us know in the comments. Your input directly shapes what we build next.

Related products: AI Software

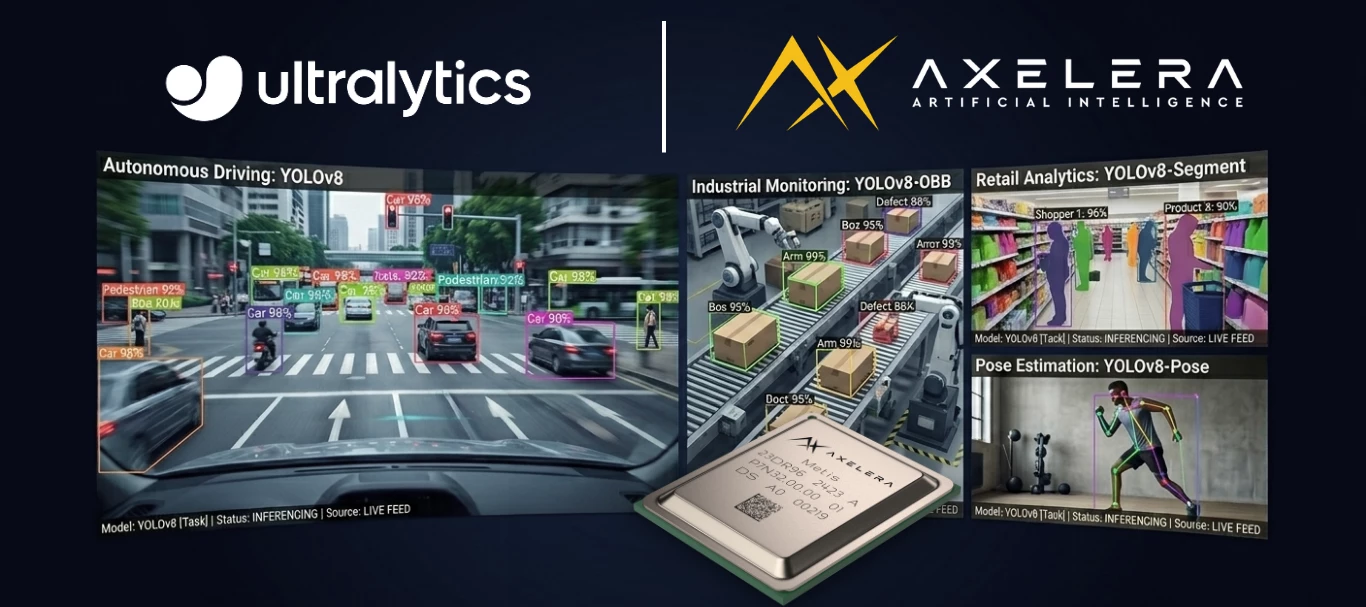

Blog | Apr 8, 2026

Run Ultralytics YOLO on Axelera AIPUs in Minutes

Trying new AI hardware typically means weeks of integration work before you can tell whether the hardware is even worth it: a new compiler, a new runtime, new preprocessing quirks. By the time you've ported your model and built the surrounding pipeline, you've invested a quarter and still don't know whether the accuracy holds up.

The Ultralytics and Axelera® AI integration removes that barrier. If you train with Ultralytics, you can export to Axelera’s Metis® AIPU, validate accuracy, and run a working application, all without leaving the tools you already know. Once you’re running on Metis with the Voyager® SDK, you can tap into the high performance and low power needed to solve your edge AI inference challenges. With four independently programmable cores, you can run models in parallel, build an end-to-end pipeline, implement adaptive tiling for high-resolution inference, and scale your hardware without reworking your pipeline configuration.

Export: One Command

```python
from ultralytics import YOLO

model = YOLO("yolo26n.pt")
model.export(format="axelera")
```

That's it. The export compiles and quantizes your model to int8, producing an .axm file optimized for the Metis AIPU. No retraining. No architecture changes. No hardware expertise required to get started.

The integration supports detection, pose estimation, instance segmentation, oriented bounding boxes (OBB), and image classification across Ultralytics YOLOv8, Ultralytics YOLO11, and Ultralytics YOLO26 models. If you've trained one of these with Ultralytics, it exports.

Validate: Know Before You Commit

Accuracy is the first question every machine learning (ML) engineer asks when evaluating new hardware, especially hardware that quantizes to int8. Historically, answering that question has been painful. Most vendors publish model zoo benchmarks, but those benchmarks use the vendor's own validation pipeline, which may not match your training framework's baseline. The comparison isn't apples-to-apples.
And it's not your model; it's a reference model with reference weights. You still don't know whether your specific model retains its accuracy on that hardware.

The Ultralytics integration changes this. You validate with the same `yolo val` you already trust:

```bash
yolo val model=yolo26n_axelera_model data=coco.yaml
yolo predict model=yolo26n_axelera_model source=test_image.jpg
```

Two checks, both using tools you already know:

- Quantitative: `yolo val` runs your validation or test set and reports mAP for the Metis-compiled int8 model, directly comparable to the baseline you trained with. No separate tooling or proxy benchmarks are required.
- Qualitative: `yolo predict` runs inference on your own test images so you can visually inspect the results. Does it look right on your data?

Metis is designed for high-accuracy int8 inference. The hardware and compiler use mixed-precision techniques under the hood to preserve accuracy where it matters most, while keeping int8 throughput. You can verify the results yourself in minutes rather than taking our word for it.

The takeaway: you can evaluate the Metis AIPU for your use case in an afternoon instead of a quarter. If the accuracy numbers work, keep going. If they don't, you've lost hours, not months, and you can focus on optimizing for your use case.

Build: From Export to Application

You have a compiled model. Now how much code does it take to build a real application around it?

This is one of the hello-world examples that ship with Ultralytics.
It includes pose estimation with multi-object tracking, and it’s ready to run:

```python
from axelera.runtime import op

pipeline = op.seq(
    op.colorconvert("RGB", src="BGR"),
    op.letterbox(640, 640),
    op.totensor(),
    op.load("yolo26n-pose.axm"),
    ConfidenceFilter(threshold=0.25),
    op.to_image_space(keypoint_cols=range(6, 57, 3)),
    op.tracker(algo="tracktrack"),
).optimized()

for frame in video:
    tracked_poses = pipeline(frame)
    for pose in tracked_poses:
        draw_skeleton(frame, pose)
```

That's the entire pipeline: preprocessing, inference, postprocessing, and tracking in just 15 lines of Python. You don't need to be a deployment expert. The Voyager SDK's Pythonic pipeline builder handles the low-level orchestration, so all you need to do is describe what happens at each stage.

For production, the pipeline builder runtime (`axelera-rt`) is lightweight. It doesn’t require PyTorch, CUDA, or a heavy ML training stack; it contains only the runtime your application needs for edge AI. When you move from evaluation to deployment, you drop the training dependencies entirely.

The Voyager SDK includes both a YAML-based pipeline builder for production deployments and this newer Python API. The Python path is the natural fit for ML engineers coming from Ultralytics because it reads like the model pipeline you would sketch on a whiteboard.

Each operator does one thing:

- `op.seq` chains them
- `.optimized()` fuses adjacent operations for speed
- The tracker adds persistent identity across frames (switch algorithms by changing a string)
- `ConfidenceFilter` is a custom operator (you subclass `op.Operator` and write a `__call__` method)

The pipeline isn't a black box, so you can insert your own logic anywhere in the chain.

What Production Looks Like at Scale

The same pipeline patterns you just saw scale far beyond a single camera. At ISC West 2026, we ran a live demo processing two 8K camera feeds and one 4K feed simultaneously on three Metis 4-chip PCIe cards.
The system ran 48 parallel model instances covering person detection, pose estimation, face recognition, weapon detection, and PPE identification, all in real time.

This is a developer blueprint for what production edge AI looks like at scale. It runs on the same Voyager SDK and Metis AIPUs available today. The hardware scales from a single-chip M.2 module to a four-chip PCIe card, but your pipeline code stays the same. When Axelera's next-generation Europa AIPU ships, it will run on the same SDK.

Read the full technical breakdown in the ISC West 2026 blog post.

Why Metis Is Built for This

Running demanding workloads at the edge requires hardware engineered for exactly that. Metis is built on a Digital In-Memory Compute (D-IMC) architecture, which brings computation to where the data lives rather than moving data to a separate compute unit. The result is more performance per watt, which matters in edge environments where power budgets are real constraints. Four independently programmable cores give you the flexibility to run four different models in parallel, or to mix single-model and cascaded configurations depending on what your application needs.

The Voyager SDK is designed to meet you where you are: a clean Python API and YAML pipeline builder for straightforward deployments, and lower-level access for developers who want to go deeper. Features like adaptive tiling let you run accurate inference across multiple 8K video streams without retraining your models. The same SDK that runs on a compact M.2 module scales to enterprise and edge server deployments without changing your pipeline code.

One platform. Infinite possibilities.

Get Started

- Follow the Ultralytics Axelera integration guide for setup (firmware, SDK installation, export, and validation)
- Run the hello-world examples (pose tracking and instance segmentation, ready out of the box)
- Explore the Voyager SDK on GitHub for the full model zoo, pipeline examples, and documentation

Tell us what you're building. We'd love to hear about it in the comments.

Related products: AI Software, AI Accelerators, Axelera Community

Blog | Mar 31, 2026

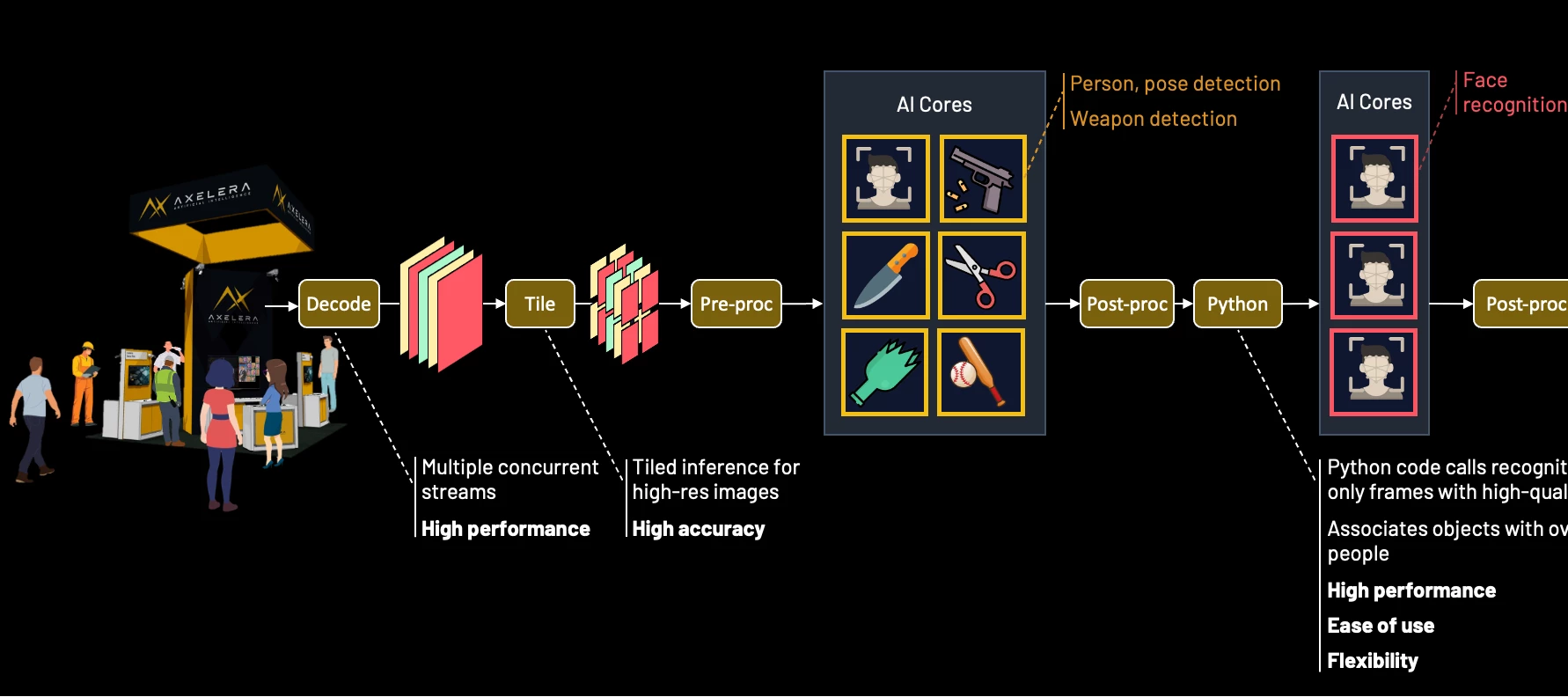

Scaling Edge AI for the Enterprise: Building the Ultimate Multi-Model, Multi-Stream Security System

This piece covers the how. If you want the why, our companion article makes the business case for edge AI in physical security.

At a Glance

- The Achievement: Real-time AI person-of-interest (POI) identification and threat detection across multiple 8K streams at 2.5 PetaOPS
- The Stack: Voyager SDK + Axelera Metis + Intel Xeon
- The Future: A 3x performance leap with the next-gen Europa architecture

Nothing matches the energy of ISC West for showcasing what we’ve been building. As we move between major industry events, we keep hearing from enterprise leaders that the "pilot phase" of edge AI is over. The challenge now is scale. It’s no longer about running a single model on a single stream; it’s about orchestrating dozens of AI models across multiple video feeds at commercially viable costs.

Returning to this show in 2026 with our most ambitious interactive experience yet is a turning point. It provides the perfect backdrop to demonstrate how we’re pushing the limits of real-time, multi-model, high-resolution, multi-stream security.

Last year we demonstrated a pioneering approach to 8K AI inference. Today, we’re expanding the Voyager SDK with safety-focused capabilities, including person-of-interest identification and an alerting and visualisation framework. These tools are designed to improve operator response times, particularly when potential weapons are detected on the show floor. In collaboration with four ISV partners, we’ve developed a security demonstration showing how easily customers can train, deploy, and integrate custom models into our end-to-end software pipelines to deliver plug-and-play AI solutions at scale.
This showcase highlights the maturity of the Voyager SDK.

If you are ready to build something like this yourself, here is where it gets interesting.

Production Pipelines for Scalable Real-World Systems

When you build on Axelera's Metis AIPUs and the Voyager SDK, you gain a complete set of capabilities for orchestrating high-concurrency, multi-stream AI workloads out of the box. Here is what developers can tap into:

- Hardware-accelerated decoding: Ingest and decode multiple 4K and 8K video streams simultaneously to maintain low latency and high throughput
- Tiling-based pre-processing: Subdivide high-resolution streams into overlapping tiles so the AI can detect small objects with high precision, while applying perspective transformations to normalise different camera angles
- Concurrent analytics: Run multiple models in parallel to detect and track individuals, face landmarks, and objects of interest simultaneously
- Model cascading: Pass detector outputs to secondary models; for example, pass regions of interest from a face detector to a recognition model
- Custom pipeline logic: Integrate user-defined code; for example, implement conditional logic to select specific frames or regions of interest to pass to secondary models
- Intelligent edge orchestration: Optimise bandwidth by sending only critical metadata and events to the cloud while retaining raw high-resolution footage for local forensic storage

New to the Voyager SDK: support for custom C++ and Python logic within the pipeline, providing the architectural flexibility demanded by modern, high-performance applications.

Security person-of-interest pipeline

Real-Time Person-of-Interest Identification

The Challenge

Real-world environments are far from ideal for identifying and tracking people of interest. Subjects move through dense crowds and around objects that obscure the view, and they may actively avoid cameras, for example by looking away.
Systems must also contend with challenging conditions such as poor lighting, motion blur, and varied facial angles.

Facial recognition models are highly sensitive to input quality. Indiscriminately processing blurry, angled, or partially occluded faces increases false rejections (failing to identify a known subject) and false identifications (incorrect matches), all while wasting valuable AI processing cycles.

The Solution

The answer is a shift from frame-by-frame recognition to more nuanced temporal processing. By inserting a tracker after the detector, developers can follow the same individual across multiple frames and maintain a pose-quality buffer for each tracker ID. Combined with conditional logic, this allows the system to pass only the highest-quality detector crops to recognition, saving processing power while improving accuracy.

As a tracked person moves through a scene, their pose-quality buffer is populated with the best available regions of interest, scored on metrics such as pose angle, pixel density, and illumination. A new region of interest replaces the weakest entry in the buffer only if its score is higher. Over time, poor angles and blurred frames are filtered out, so only the most reliable data reaches the recognition model.

Anchor and Update

The first high-quality region of interest can be cascaded immediately to the recognition model, letting the system make a primary identification while the pose-quality buffer fills. Once the buffer reaches a defined threshold, the system batch-processes it to refine the result. Instead of treating each match in isolation, the recognition outputs are combined using a Bayesian update to produce a cumulative confidence score. This effectively amplifies multiple lower-confidence matches into a single, high-certainty identification.

This process treats each new region of interest as a multiplier of existing evidence.
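The buffer and the cumulative update described above can be sketched in a few lines of Python: a per-track buffer that keeps the k best crops and admits a new one only if it beats the weakest, and a Bayesian fusion of recognition scores in log-odds form. All names are hypothetical, and the fusion assumes each score is a calibrated probability computed from the same prior and that observations are conditionally independent. This is an illustration of the technique, not the demo's actual implementation.

```python
# Per-track quality buffer plus Bayesian score fusion (hypothetical sketch).
import heapq
import math


class QualityBuffer:
    """Keep the k highest-quality crops; a new crop evicts the weakest only if better."""

    def __init__(self, k: int):
        self.k = k
        self._heap: list[tuple[float, int, object]] = []  # min-heap keyed on quality
        self._seq = 0  # insertion counter, breaks ties between equal scores

    def offer(self, quality: float, crop: object) -> bool:
        """Try to add a crop; return True if it was kept."""
        item = (quality, self._seq, crop)
        self._seq += 1
        if len(self._heap) < self.k:
            heapq.heappush(self._heap, item)
            return True
        if quality > self._heap[0][0]:
            heapq.heapreplace(self._heap, item)  # evict the current weakest crop
            return True
        return False

    def best_first(self) -> list[object]:
        return [crop for _, _, crop in sorted(self._heap, reverse=True)]


def logit(p: float) -> float:
    return math.log(p / (1.0 - p))


def fuse(probs: list[float], prior: float) -> float:
    """Combine independent match probabilities that were each computed from `prior`.

    Each observation adds its evidence, logit(p) - logit(prior), to the
    prior log-odds; the total is mapped back to a probability.
    """
    log_odds = logit(prior) + sum(logit(p) - logit(prior) for p in probs)
    return 1.0 / (1.0 + math.exp(-log_odds))


buf = QualityBuffer(k=2)
for quality, crop in [(0.4, "blurry"), (0.9, "frontal"), (0.6, "profile")]:
    buf.offer(quality, crop)
print(buf.best_first())                        # ['frontal', 'profile']
print(round(fuse([0.7, 0.7], prior=0.01), 3))  # 0.998
print(round(fuse([0.7, 0.7], prior=0.5), 3))   # 0.845
```

The last two lines show why the prior matters: with a low, watchlist-style prior, two 70% matches fuse to about 99.8%, well above a single 90% match, whereas with an even 50/50 prior they fuse to only about 84.5%.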
Mathematically, when the prior probability that any given face belongs to a specific watchlist subject is low, two independent 70% matches provide greater certainty than a single 90% match. This architecture ensures temporal stability and resilience against noise and outliers: once a high-confidence identity is established through multiple high-quality frames, transient data from a blurry frame or a passing occlusion will not overturn the cumulative evidence.

Secure the Critical Moment

An override gate ensures that the system identifies subjects who may appear clearly for only a single frame. If an incoming region of interest has a high-quality frontal pose and returns a high-confidence match, the identification is considered immediately reliable. This triggers an instant alert and can be configured to supersede the existing buffer of lower-quality data. This mechanism prevents temporal blindness by ensuring that even a fleeting, high-quality glimpse of a subject results in a successful identification.

Region-of-interest (ROI) processing

The Axelera Surveillance Blueprint

Next, we combined the person-of-interest tracker with real-time weapon detection, designed specifically for multi-model concurrency. This blueprint lets developers easily extend the system by running a range of analytics models in parallel.

To maximise detection reliability, each subject is represented as a detection pair: person and face. By running person and face detection models in parallel on every frame, the system maintains dual-path tracking: faces can be identified even when bodies are occluded by crowds or objects, while individuals can be tracked when their face is not visible. The application dynamically maps overlapping detections to maintain a persistent identity for each subject.

The pipeline is configured to prevent frame drops: if a new frame arrives before all recognition tasks complete, the remaining tasks are scheduled asynchronously across subsequent detections.
This allows the system to iteratively scan and resolve an entire scene over time without compromising camera throughput (similar to how a human would process the scene, just much faster).

The blueprint is engineered to commit fast and refine slowly. It uses the first high-quality match to establish an initial identity with low latency, while maintaining a pose-quality buffer to improve certainty over time. Combined with the override gate, this ensures that even brief, high-quality captures result in a successful identification.

To manage these detections, the interface displays all tracked individuals in a grid of high-resolution regions of interest: a crowd view. By using the full 33-million-pixel resolution of the 8K feeds, it lets operators keep sight of distant subjects that would otherwise be lost to downscaling on most monitors. The interface uses bi-directional linking: when you hover over a person in the grid, the system draws a line to their position in the raw feed, and vice versa. With a single toggle, the grid can show either the live view of each person or the best shot from the pose-quality buffer.

Combined person-of-interest tracking with weapon detection

Weapon Detection

While I’d have loved to show a realistic weapon demo, the venue (and the common sense of my colleagues) suggested that bringing firearms onto the show floor was a bad idea. Instead, I opted for a weapon from a more civilised age: a lightsaber.

For weapon detection, I chose the distinctive curved hilt of Count Dooku's lightsaber, which serves as an ideal target for our demonstration. Although it is a prop, its geometry is highly representative of tactical batons, bladed weapons, or firearm suppressors. This allows us to demonstrate high-precision detection with native 8K inference across a live, multi-camera environment in a way that’s high impact but zero risk.

This image was originally posted to Flickr by Jay Malone. Source: Flickr.
It is licensed under the Creative Commons Attribution 2.0 Generic license.

The Axelera surveillance blueprint configuration (as demonstrated at ISC West 2026):

- Person-of-Interest Watchlist: Axelera staff are enrolled as the primary subjects for person-of-interest identification and tracking.
- Weapon Detection: A custom Ultralytics YOLOv8l lightsaber model acts as the weapon detector.
- 8K Camera Setup: Two Axis Q1809-LE 8K IP cameras are positioned at the top of the booth to survey the show floor.
- 8K Display: A 75-inch 8K monitor displays the two primary feeds (downsampled) on the left and the crowd view grid on the right.
- Focus View Cell: A large area within the grid highlights high-priority weapon alerts. When no alert is active, this space displays a live 4K feed from an internal booth camera for visitor interaction.
- Personal Protective Equipment (PPE) Verification: When the 4K booth camera detects a person wearing full PPE, the interface illuminates with a green shield to signal compliance.
- Edge-to-Cloud Orchestration: Detections trigger an on-booth alarm while simultaneously pushing automated incident tickets to ServiceNow for remote response.

The demo's intelligence is a collaborative effort. Digica provided the face detection and recognition models, while Innowise developed the lightsaber detection model using a blend of Synthera's synthetic data and real-world imagery. Additionally, SpanIdea contributed a PPE detection model that distinguishes between show attendees and construction workers.

Demo Hardware and Performance

To perform real-time inference of multiple models across parallel 8K streams, we used an ORIGIN L-Class V2 PC equipped with an Intel Xeon W7-3565X 32-core processor and a discrete GPU for the visual pipeline (decoding and 8K rendering).

The compute backbone consists of three Axelera 4-chip Metis cards, providing a total of 48 AIPU cores (3 cards × 4 chips × 4 cores).
This configuration delivers a peak of 2.5 PetaOPS of parallel processing power to handle the high-resolution tiling and model processing required.

The system is integrated into the booth infrastructure using a Ubiquiti Switch Pro XG 24 PoE, which provides high-bandwidth data transfer and power to the AXIS 8K cameras.

Running the surveillance blueprint at 8K resolution requires significant throughput to maintain real-time responsiveness. The system's performance is defined by the following metrics:

- Tiling Throughput: The system processes 288 tiles/sec for the fully configured blueprint.
- Model Concurrency: Each 4-chip Metis card executes up to 16 model instances in parallel.
- Total System Capacity: Across three cards, the system runs five primary models and one secondary model on 48 cores. This achieves a combined throughput of at least 1,440 model inferences/sec, equivalent to 288 tiles/sec × 5 primary models. The architecture sustains stable inference across all video feeds without thermal throttling or performance degradation.
- Power Efficiency: Despite this high processing rate, the Metis architecture maintains an efficient power profile, with a typical draw of only 30–58 W per card.

Looking Ahead: The Next Phase of Edge AI

For Axelera, innovation is constant. Our next-generation Europa architecture delivers a 3x performance increase over Metis, integrating on-chip video decoding and vector engines to accelerate preprocessing. This provides the AI headroom that next-generation surveillance systems require. Furthermore, the integration of custom Python logic into the Voyager pipeline marks the first step toward our Python-friendly Pipeline Builder API. This gives developers complete freedom to build complex, thread-safe pipelines with high-performance execution, directly delivering on our mission to make AI accessible to everyone.

Our growing ecosystem provides customers with an increasing choice of models and capabilities to integrate into their solutions.
Axelera blueprints allow developers to 'mix and match' our model zoo with independent software vendor models. This enables autonomous edge response that moves beyond simple alerting to initiating local, real-time defensive protocols, all while significantly reducing software costs and time to market.

Axelera uniquely delivers on all three critical requirements for an edge AI platform:

- Ease of Use: Rapidly parameterise and customise for a wide range of embedded, desktop, and enterprise-grade hardware.
- Flexibility: Manage diverse tasks and complex dataflow requirements within a single, modular pipeline.
- Performance: Voyager SDK handles the low-level heavy lifting, such as multi-stream threading, buffer sharing and synchronisation, across cameras, decoders, the host CPU and Metis hardware.

By providing these foundational hardware and software blocks, Axelera AI enables high-performance security at scale, ensuring our customers stay ahead of evolving threats with ease.
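The blueprint's evidence rules can be sketched in a few lines of Python. Matches accumulate in a pose-quality buffer, an identity is committed once the combined certainty is high enough, and a single clear frontal match passes through the override gate. This is a minimal illustration, not Voyager SDK code. The `IdentityEvidence` class, every threshold and the buffer size are hypothetical. Combining scores as if they were independent probabilities, 1 − ∏(1 − s), is our assumption, chosen because it reproduces the "two 70% matches beat one 90% match" arithmetic.

```python
from dataclasses import dataclass, field
from typing import List, Tuple

# Hypothetical tuning values; none of these come from the Voyager SDK.
MIN_POSE = 0.4          # frames posed worse than this are ignored as unreliable
OVERRIDE_POSE = 0.8     # frontal-pose quality required by the override gate
OVERRIDE_SCORE = 0.9    # single-frame match score required by the override gate
COMMIT_THRESHOLD = 0.9  # cumulative certainty needed to commit an identity
BUFFER_SIZE = 5         # number of best-posed matches kept per subject


@dataclass
class IdentityEvidence:
    """Pose-quality buffer for one tracked subject against one watchlist entry."""

    matches: List[Tuple[float, float]] = field(default_factory=list)  # (pose, score)
    overridden: bool = False

    def add(self, pose_quality: float, match_score: float) -> None:
        if pose_quality < MIN_POSE:
            return  # blurry or occluded frame: discarded without touching the buffer
        if pose_quality >= OVERRIDE_POSE and match_score >= OVERRIDE_SCORE:
            self.overridden = True  # override gate: one clear glimpse is enough
        self.matches.append((pose_quality, match_score))
        # Keep only the best-posed frames; the worst-posed one is evicted first.
        self.matches.sort(reverse=True)
        del self.matches[BUFFER_SIZE:]

    def certainty(self) -> float:
        # Treat each buffered match as independent evidence: the identity is
        # wrong only if every match is wrong, so P(correct) = 1 - prod(1 - s).
        p_all_wrong = 1.0
        for _, score in self.matches:
            p_all_wrong *= 1.0 - score
        return 1.0 - p_all_wrong

    def is_identified(self) -> bool:
        return self.overridden or self.certainty() >= COMMIT_THRESHOLD


if __name__ == "__main__":
    evidence = IdentityEvidence()
    evidence.add(pose_quality=0.6, match_score=0.7)
    print(evidence.is_identified())        # False: 0.70 is below 0.9
    evidence.add(pose_quality=0.5, match_score=0.7)
    print(round(evidence.certainty(), 2))  # 0.91
    print(evidence.is_identified())        # True
```

Frames below `MIN_POSE` are discarded outright, so a blurry frame cannot drag the certainty down. The independence assumption is optimistic, though. Consecutive frames of the same face are strongly correlated, so a real tracker would likely discount near-duplicate frames rather than multiply them naively.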

Related products: AI Software, AI Accelerators

Blog, Mar 24, 2026

The Future of Security Is Already Running. Here Is What It Looks Like.

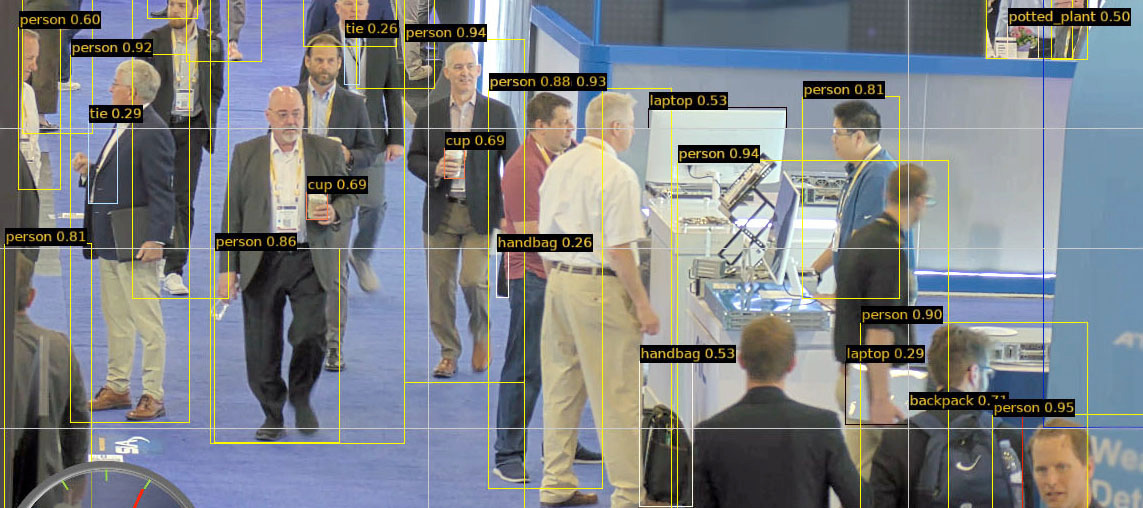

A camera sees everything and understands nothing. For decades, that has been the fundamental limitation of physical security at scale: vast amounts of footage, but limited ability to act on it in real time. The gap between what a camera captures and what a security team can actually respond to is where incidents happen. Edge AI closes that gap.

At ISC West 2026, we built a working example of exactly that. It is a live, multi-camera edge AI security system running in a crowded convention space, tracking persons of interest, detecting novel threats in real time, and keeping every frame of footage on-site. Here is what it looks like.

The problem is not the cameras. It is what happens after.

Modern physical security operations are sitting on a significant and largely untapped asset: high-resolution camera infrastructure that covers more ground, captures more detail, and retains more data than any previous generation of technology. The hardware investment is already made. The feeds are already live.

The challenge is that more cameras have not made the security team's job easier. They have made it larger.

According to a 2024 survey by MSSP Alert and CyberRisk Alliance, 62% of security alerts are ignored entirely. When every feed is an equal priority, none of them is. Security teams at major airports, sporting venues, and large-scale public events are experienced professionals making triage decisions under pressure. AI should be supporting those decisions rather than leaving them entirely to human judgment.

The financial picture compounds this. Legacy approaches to scaling security have been linear and expensive:

- More cameras mean more storage, more bandwidth, and more cloud processing costs.
- More data means more staff hours reviewing footage after the fact.
- More cloud processing means more exposure to the governance questions that compliance and legal teams are increasingly being asked to answer.

And that third point has a way of becoming the most expensive one.
Moving video footage and biometric data to the cloud creates regulatory exposure that is tightening globally. Data residency requirements, biometric privacy laws, and AI governance frameworks are evolving faster than most enterprise procurement cycles. Every frame that leaves the building is a question your legal team may eventually have to answer.

A note on getting it wrong: the cost of failure in security AI runs in both directions. False positives disrupt operations, damage trust in the system, and create the kind of alert fatigue that leads operators to start ignoring alerts altogether. False negatives are worse. Confidence in the system's outputs, not just its speed, is what separates a tool security teams rely on from one they work around.

Why most edge AI platforms ask you to make a trade-off

The embedded vision industry has spent years normalising a problem it has not fully solved. Delivering a capable edge AI platform means satisfying three requirements simultaneously.

The embedded vision trade-off most platforms ask you to accept:

- Performance: the processing power to run multiple AI models across multiple high-resolution streams in real time
- Ease of use: the ability to integrate with existing infrastructure without a specialist engineering team and a six-month timeline
- Flexibility: the capacity to extend the system as requirements evolve, add new capabilities, and integrate with the partner ecosystem you have already built

Most platforms optimise for two at the expense of the third. Voyager SDK is engineered to deliver all three.

On most platforms, the missing requirement surfaces during the proof of concept. The cost of that gap is measured in the months of engineering work it takes to get from a working demo to a production deployment.

For example, GStreamer requires specialist expertise that most teams have to hire or train for, adding cost and delay before a project even begins. PyTorch is accessible but often underperforms on real edge hardware.
Most edge AI vision SDKs work well within their predefined boundaries, and extending them beyond those boundaries is where timelines and budgets tend to slip.

The Metis® AI Processing Unit was designed from the ground up for inference at the edge. It offers high parallelism and low power draw, and it is purpose-built for the multi-model, multi-stream workloads that real security environments generate. The Voyager® SDK was built alongside it, not bolted on afterward, which is why the two function as a system rather than as components that happen to be compatible.

Critically, this architecture works with existing camera infrastructure. New compute does not mean new cameras.

From detection to decision: what it looks like when it works

Screenshot of demonstration in action.

At ISC West 2026, Axelera® AI is demonstrating exactly what that looks like in practice. Here is what the demonstration shows:

- Three camera streams (two 8K and one 4K) feed simultaneous live video into a single edge system. It runs on an ORIGIN L-Class V2 workstation PC with an Intel Xeon W7-3565X 32-core processor.
- Multiple AI models run in parallel across three PCIe cards, each housing four Metis AIPUs.
- Person-of-interest tracking goes well beyond simple detection:
  - As a subject moves through the crowd, the system continuously builds a profile of who they are. It draws on multiple frames and camera angles over time to improve certainty as better-quality images become available.
  - The system keeps refining its view of each subject, holding onto only the best angles and sharpest images.
  - The system only needs one clear moment. If a subject glances toward a camera even briefly, that single high-quality sighting is enough to trigger an immediate, confirmed identification.
  - Person and face detection run in parallel on every frame, maintaining tracking even when a subject's face is obscured or their back is turned.
- Novel threat detection uses a custom-trained model built on synthetic data to catch what metal detectors cannot. Synthera generated thousands of synthetic training images of a plastic prop weapon using its Chameleon platform. Innowise used that dataset to train and benchmark Ultralytics object detection models and select the best fit for this solution.
- Face detection and recognition are powered by Digica. They are engineered for high-precision accuracy and demographic parity across diverse races, genders, and age groups to ensure reliable identification in any environment.
- PPE detection, powered by SpanIdea, identifies safety helmets and high-visibility vests in real time to distinguish between attendees and staff on the show floor.
- Automated incident reports are pushed to remote response systems the moment an alert triggers.
- Capabilities from four ISV partners are integrated into one pipeline using the Voyager SDK, with no bespoke middleware between them.
- Raw footage never leaves the building. When a confirmed threat triggers a remote response, only the relevant event data is transmitted.

Catching a threat is only half the problem. Getting the right information to the right person fast enough to do something about it is the other half.

Consider what a large-venue security team actually needs from an AI system during a live event with tens of thousands of people moving through the space. The system needs to maintain awareness across the entire environment simultaneously and surface only the detections that warrant human attention. It also needs to give the operator enough context to act immediately rather than investigate.

Here is how that plays out in practice:

- A subject enters the environment. As with every subject, tracking begins and follows them as they move between all cameras in the venue.
- As the subject moves through the space, the system continuously builds a profile of who they are. It draws on multiple frames and camera angles over time to improve certainty as better-quality images become available.
- An operator is notified when the system reaches sufficient confidence that the profile matches a person of interest (someone on the watchlist). The alert arrives with context: a high-resolution crop of the subject, their position in the live feed, and a clear confidence indicator.
- If a weapon or object of concern is detected, the alert escalates immediately. A visible and audible alarm sounds at the security station, and an automated incident report is pushed to remote response systems for follow-up.

The system commits fast and refines over time. An initial identification is made as soon as the evidence supports it, and the confidence picture continues to improve as additional data becomes available. A single poor-quality frame does not define the result. Neither does a momentary occlusion.

The non-metallic threat problem

One capability worth calling out is that the threat landscape for large venues has evolved beyond what traditional metal detectors were designed to catch. Composite materials, plastic-based weapons, and purpose-built items can evade conventional screening entirely. AI-based detection can be trained for these threat categories before they are encountered in the field, using synthetic data to build detection capability ahead of real-world exposure. With synthetic training data, the system can even be trained to detect a novel weapon from its 3D CAD design before a physical version exists. That is a meaningfully different level of preparedness.

The platform is only as capable as what can be built on it

Most enterprise security teams already have specialised tools they depend on and ISV partners they trust. The question is never whether to use them.
It is whether the platform can bring them all together.

At ISC West 2026, we built a live demonstration with four ISV partners. Each integrated its own specialist capabilities into a single working system using the Voyager SDK:

| Partner | Capability |
|---|---|
| Synthera | Synthetic training data generation for novel and non-metallic threat categories |
| Innowise | Trained multiple Ultralytics models using a combination of synthetic and real-world data, then benchmarked them to identify the model delivering the best performance-accuracy trade-off on Metis hardware |
| Digica | Face detection and recognition engineered for demographic parity across diverse populations |
| SpanIdea | PPE detection for real-time classification of individuals by role and compliance status |

None of these integrations required bespoke middleware. Each partner used the same modular pipeline framework. The result is composable: capabilities from different partners work together in a single pipeline, and new integrations add to the foundation without displacing what is already there.

For a buyer making a multi-year infrastructure decision, this matters as much as any hardware specification. Requirements evolve. New threat categories emerge. Regulatory expectations shift. Because the platform extends through a growing ecosystem of partners, the initial investment does not become technical debt when any of those things happen.

The bottom line

Here is how this demonstration addresses the issues we started with.

On cost and infrastructure: This demonstration deploys three PCIe cards, each housing four Metis AIPUs. Each AIPU contains four independently programmable cores, so the configuration can run 48 AI model instances in parallel (3 cards × 4 AIPUs × 4 cores) at a typical power draw of 30 to 58 watts per card. Compared to GPU-based alternatives, the difference in power consumption, cooling requirements, and operational cost at scale is substantial.
Edge processing also reduces the bandwidth and cloud compute costs that make legacy approaches increasingly expensive to operate as deployments grow.

On governance and compliance: Raw high-resolution footage stays local. Only actionable metadata and event notifications move to the cloud or to remote response systems. The architecture is the compliance answer: not a policy statement that sits alongside the technology, but a structural property of how the system processes and moves data.

On the security team: The system is designed to reduce alert fatigue by surfacing high-confidence, context-rich notifications rather than generating volume. Operators receive the information they need to make a decision, not a queue of detections to work through. The job does not get smaller. It gets manageable.

The physical security challenge is universal, and our blueprint for addressing it is already scaling. From the retail floor to the front lines of defence, we are bringing precision-engineered edge AI performance to the industries that need it most.
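In the demonstration described above, person and face detection run in parallel on every frame, so a subject stays tracked when their face is hidden or their body is occluded. One simple way to build those person/face pairs is to attach each face to the person box that contains most of it. The following sketch takes that approach. The function names, the `(x1, y1, x2, y2)` box format, the 0.7 containment threshold and the greedy matching are all hypothetical choices for illustration, not a description of how Axelera's pipeline does it.

```python
from typing import Dict, List, Optional, Set, Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2) in pixels


def containment(face: Box, person: Box) -> float:
    """Fraction of the face box's area that lies inside the person box."""
    ix1, iy1 = max(face[0], person[0]), max(face[1], person[1])
    ix2, iy2 = min(face[2], person[2]), min(face[3], person[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area = (face[2] - face[0]) * (face[3] - face[1])
    return inter / area if area > 0 else 0.0


def pair_faces_with_people(
    faces: List[Box], people: List[Box], min_containment: float = 0.7
) -> List[Tuple[Optional[int], Optional[int]]]:
    """Greedily pair faces with people and keep unmatched detections.

    Returns (person_index, face_index) pairs, with None for a missing half,
    so a hidden face or an occluded body still yields a track entry.
    """
    candidates = sorted(
        ((containment(f, p), fi, pi)
         for fi, f in enumerate(faces) for pi, p in enumerate(people)),
        reverse=True,
    )
    face_to_person: Dict[int, int] = {}
    used_people: Set[int] = set()
    for score, fi, pi in candidates:
        if score < min_containment:
            break  # candidates are sorted, so no better pair remains
        if fi in face_to_person or pi in used_people:
            continue  # each face and each person is used at most once
        face_to_person[fi] = pi
        used_people.add(pi)
    pairs: List[Tuple[Optional[int], Optional[int]]] = [
        (pi, fi) for fi, pi in face_to_person.items()
    ]
    pairs += [(None, fi) for fi in range(len(faces)) if fi not in face_to_person]
    pairs += [(pi, None) for pi in range(len(people)) if pi not in used_people]
    return pairs


if __name__ == "__main__":
    people = [(0, 0, 100, 300), (200, 0, 300, 300)]
    faces = [(30, 10, 70, 50), (500, 10, 540, 50)]
    print(pair_faces_with_people(faces, people))
    # [(0, 0), (None, 1), (1, None)]
```

The function returns both faces without a body (crowd occlusion) and bodies without a face (back turned), which keeps both paths of a dual-path tracker alive. In dense crowds, one face can sit inside several overlapping person boxes. A fuller implementation might therefore also require the face to lie in the upper part of the person box.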

Related products: AI Software, AI Accelerators

Blog, Feb 9, 2026

Evolving Space Autonomy: Axelera AI and AIKO Join Forces to Advance Onboard Intelligence

A new collaboration combines a decade of heritage in autonomous space systems with Axelera AI's cutting-edge processor technology, optimizing the next generation of intelligent space infrastructure.

Space exploration has always pushed the boundaries of human innovation. Today, as missions multiply and constellations become more complex, the demand for systems capable of autonomous reasoning and real-time adaptation continues to rise.

The answer lies in bringing artificial intelligence directly to space. That is exactly why Axelera AI and AIKO are partnering to push onboard intelligence further, bringing together advanced software and processor technologies designed for the extreme conditions of space.

The Unique Challenge of Space AI

Operating AI in space isn't just about adapting terrestrial technology. The environment beyond Earth's atmosphere presents extraordinary challenges: extreme radiation that can corrupt computations, severe power constraints where every watt matters, and the impossibility of physical maintenance or hardware upgrades. Most critically, windows of contact with Earth are limited, making continuous exchanges costly and highly resource-intensive; each communication requires careful planning. Spacecraft must therefore process data and make critical decisions locally, identifying anomalies, reacting to environmental changes, and maintaining operations independently.

“In space, timing is everything,” explains Lorenzo Feruglio, CEO of AIKO. “When communication windows are short, autonomy isn’t optional: it’s what keeps missions efficient and resilient.”

The Growing Urgency of Satellite Autonomy

Closer to home, Earth's orbital environment is becoming increasingly crowded. According to the European Space Agency, approximately 15,860 satellites currently orbit our planet, with about 12,900 still active. This congestion is only accelerating.
Goldman Sachs estimates the satellite market will grow sevenfold over the next five years, with 70,000 low Earth orbit satellites expected to launch during that period.

AI inferencing enables on-board decision-making for selective communications when bandwidth is critical

This explosive growth creates two critical challenges. First, satellites must navigate autonomously through an orbital freeway crowded with other spacecraft, rockets, and debris. Only onboard AI can execute the split-second maneuvers needed to avoid collisions that would create thousands of dangerous fragments. Second, these satellites generate massive volumes of data. Today, Earth observation satellites transmit raw imagery to ground stations, where humans or AI datacenters search for critical information such as emerging wildfires, severe weather patterns, or agricultural intelligence. By embedding AI inference directly into satellites, spacecraft can analyze data onboard and immediately transmit actionable intelligence rather than terabytes of raw images. A satellite detecting a wildfire's heat signature can alert authorities with precise coordinates while the fire is still containable, rather than waiting for ground analysis.

A Partnership Built on Complementary Strengths

With over ten years of experience developing AI-driven autonomy for satellites and spacecraft, AIKO has built flight-proven software that enables onboard decision-making across multiple missions. Its processor-ready architecture is designed to maximize performance while respecting the demanding power and safety requirements of space operations.

Axelera AI contributes state-of-the-art processor technology designed for exceptional performance per watt, a critical metric for space applications. Its processors enable real-time AI inference at the edge, processing massive amounts of data where bandwidth back to Earth is precious and limited.

This collaboration represents more than just a technology integration.
Together, the two companies create a stronger foundation for in-orbit autonomy. AIKO's heritage and flight-proven software stack is integrated with Axelera AI's processors, which are precision-engineered to sustain advanced inference in constrained environments.

AI for on-board analysis and navigation improves ROI, efficiency, and resource allocation

Transforming Space Operations

This collaboration aims to accelerate the adoption of intelligent, self-reliant spacecraft capable of processing data onboard, prioritizing insights, and optimizing their missions without waiting for ground intervention.

From autonomous maneuvering and adaptive scheduling to real-time data analysis, onboard AI enhances mission reliability, reduces latency, and makes satellite operations more scalable than ever before.

Crucially, this partnership lays the groundwork for chip-plus-software solutions ready for space qualification, bridging the gap between Earth-based AI capabilities and the operational realities of orbit.

- Autonomous Mission Adaptation: Satellites equipped with onboard AI can modify their operations in real time based on observations, weather patterns, or unexpected events, without waiting for ground control intervention.
- Efficient Data Processing: Instead of transmitting raw data back to Earth for analysis, spacecraft can process information onboard, sending only the most relevant insights.
This dramatically reduces bandwidth requirements and accelerates decision-making.
- Enhanced Reliability: AI-powered predictive maintenance can identify potential system issues before they become critical, enabling proactive responses that extend mission lifespans.
- Scientific Discovery: Autonomous systems can identify and investigate unexpected phenomena immediately, rather than waiting for human analysis that might come too late to capture fleeting events.

Looking Ahead

We stand on the cusp of a new era in space exploration, with planned missions to Mars, an expanding lunar presence, and growing satellite constellations. The need for intelligent, autonomous space systems has never been greater. The partnership between Axelera AI and AIKO represents a significant step toward making these ambitions a reality.

This collaboration combines AIKO's processor-ready software solutions with Axelera AI's cutting-edge hardware designed for the extreme conditions of space. Together, they are actively deploying the technologies that will define how future satellites and spacecraft operate.

The next generation of space missions won't just carry AI as an experiment; they'll depend on it as a critical capability for success. Through partnerships like this, the European space industry is positioning itself at the forefront of this transformation. As humanity reaches further into space, our machines will be able to think, adapt, and explore alongside us.

Related products: AI Accelerators

Blog, Jan 15, 2026

CES 2026: From AI Hype to Inference Reality at the Edge

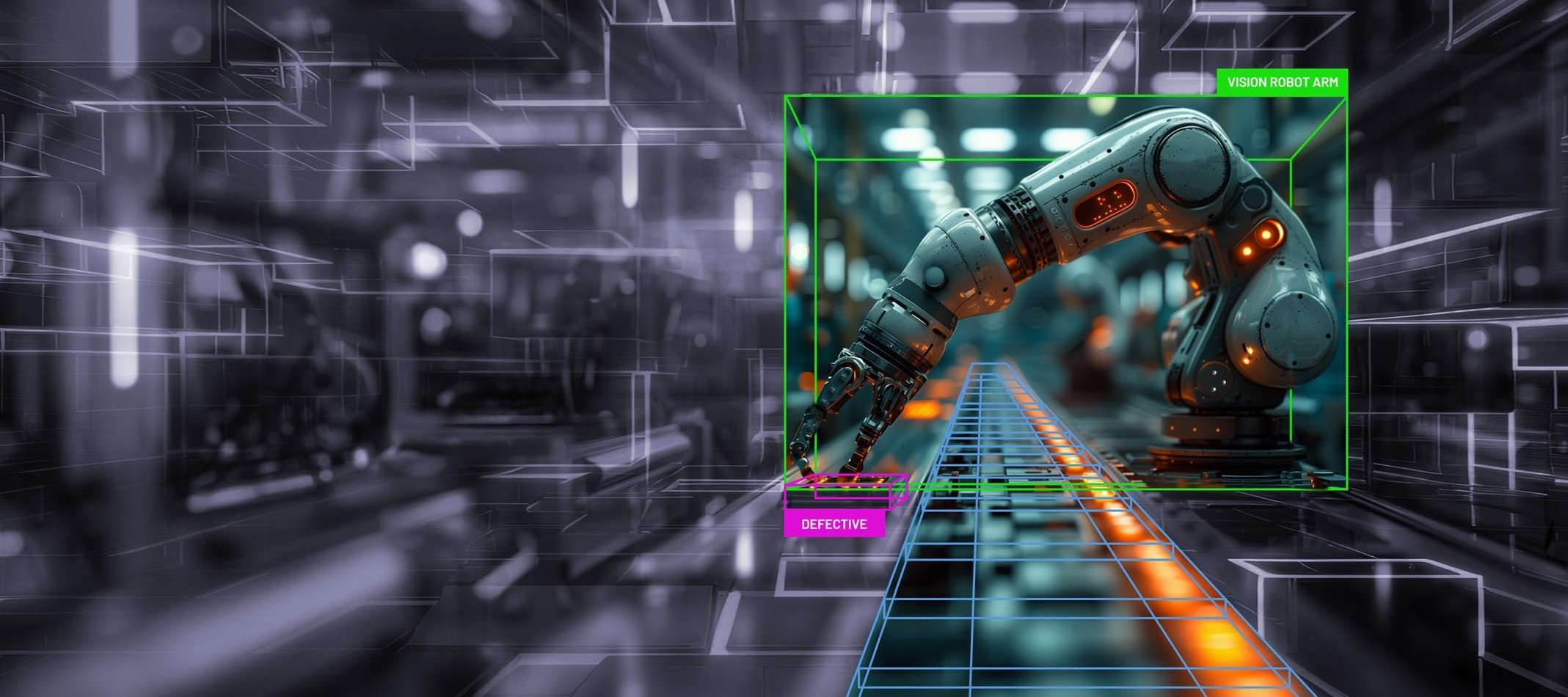

CES has always been a bellwether for where technology wants to go. CES 2026 felt different. This year wasn’t defined by a single breakthrough announcement or the unveiling of a bigger, shinier model. Instead, it marked a quieter but more important shift in tone.

AI didn’t get bigger at CES. It got more real.

Across keynotes, booths, and conversations, the focus moved away from who can train the largest model and toward a harder set of questions. How do you run AI reliably? Where does inference actually happen? And what does it take to deploy AI systems outside of a controlled demo?

From Training Obsession to Inference Accountability

The most notable pivot at CES wasn’t a rejection of training but an acceptance that inference is now the bottleneck.

Training remains the domain of a small number of hyperscalers and frontier labs. But inference is where AI meets reality: power budgets, latency constraints, connectivity gaps, and cost ceilings. This is where architectural decisions start to matter more than peak theoretical performance.

The economics tell the story. A model trained once can be deployed millions of times. Every inference event carries a cost in compute, power, and infrastructure. Suppose you're processing video streams 24/7, analyzing sensor data in real time, or running vision models on battery-powered devices. Then efficiency stops being a nice-to-have. It becomes the entire business case.

At CES, conversations increasingly centered on:

- Predictable inference cost
- Power efficiency and thermal envelopes
- Deployment complexity
- Offline and near-edge operation

This isn’t a shift away from training, but a decoupling of roles. Training remains the workload that creates models and capabilities. Inference underpins how those capabilities are applied, embedded, and scaled across real systems. It becomes a tool that improves and accelerates every workload, from databases and video analytics to robotics and industrial automation.
In that sense, inference is no longer an afterthought. It is the defining challenge for turning AI into something usable.

That’s the challenge Axelera AI was made for. At our CES suite, we demonstrated exactly what efficient inference looks like in practice. A 4-chip PCIe card ran up to 16 concurrent AI models processing 8K video on a single edge device. Pose detection, face recognition, and segmentation ran simultaneously without thermal throttling or performance degradation.

Edge AI is Here, and it’s Demanding Definitions

Another clear theme was the broad push by chip makers into edge AI. On the surface, this looks like diversification. Underneath, it reflects something deeper: constraints force honesty.

Edge environments don’t allow for vague promises. They expose the gaps between marketing claims and deployable systems. At CES, “edge AI” was used to describe everything from embedded vision systems to rack-mounted servers branded as edge appliances.

That ambiguity matters because edge AI isn't just about location. It's about operating under real-world constraints that datacenter AI never faces. True edge deployments must handle thermal challenges in industrial settings, operate reliably without constant connectivity, and deliver consistent performance on limited power budgets.

True edge AI raises hard questions that expose architectural choices:

- Can models run offline?
- What host system is required, and how heavy is it?
- How much power does inference actually consume?
- How easily can developers port existing models?

CES made it clear that edge AI hasn’t just arrived; it’s demanding clearer definitions and greater accountability.

Physical AI: Vision, Belief, and Skepticism

“Physical AI” emerged as the phrase of the week, often used to describe robotics, vision-guided systems, and real-time perception. The excitement is justified.
These systems represent the next wave of AI value, where software directly interacts with the physical world.

Manufacturing lines that detect defects in real time. Autonomous mobile robots navigating dynamic warehouse environments. Agricultural systems that respond instantly to crop conditions. These applications unlock genuine business value by bringing AI capabilities to where physical work happens.

But CES also surfaced healthy skepticism.

Many physical AI demos glossed over fundamentals like deployment readiness, power consumption, or dependency on cloud connectivity. Belief in physical AI is widespread, but belief alone doesn’t ship products.

For physical AI to move from concept to scale, it must be:

- Deterministic: producing consistent results under varying conditions
- Efficient: operating within strict power and thermal budgets
- Cloud-independent: capable of operating without constant connectivity

In short, physical AI only works when inference works. The promise of robots and intelligent systems is constrained by the same reality facing every edge AI deployment. You need reliable, efficient inference that operates in the real world, not just in controlled demonstrations.

What We Heard

At Axelera AI, we spent CES listening and learning, and yes, showcasing technology. The most common questions we received weren’t about peak performance but about practical deployment:

- How flexible is your SDK, and how much control do developers have over the pipeline?
- How difficult is it to port an existing model?
- What does real-world power consumption look like?
- Does the system require a full host, or can it work with a lightweight one?
- Can AI workloads run fully offline?
- Is the supply chain ready for production deployments?

These questions signal a market that has matured.
Teams aren’t experimenting anymore: they’re planning to ship.

The realities of practical deployment came up in conversations with independent software vendors (ISVs). They reinforced that while promptable open-vocabulary models are generating a lot of excitement, customers still rely on traditional closed-set models fine-tuned on task-specific datasets.

We were able to work around the need for a vision language model (VLM) by combining a large language model (LLM) with a segmentation model trained on a closed-set dataset such as COCO. Others on the show floor were implementing the same near-term solution, because the point is not cutting-edge research but production-ready engineering. While we’re excited to add VLM support this year, users can get the results they need today with this simple solution.

The lesson is clear. The models that ship aren't necessarily the ones generating papers. They're the ones that work reliably, deploy easily, and deliver consistent results under real-world conditions.

Building for the Inference Reality

CES 2026 reinforced something we’ve believed for a long time: edge AI success isn’t defined by hype cycles or buzzwords. It’s defined by whether inference survives contact with the real world.

The most compelling demonstrations at CES weren't the ones with the most impressive specifications. They were the ones solving actual business problems with measurable ROI.

For example, our partner WebOccult demonstrated quality control for commercial bakeries. High-frame-rate cameras and computer vision detect, classify, and count different products moving down manufacturing lines at 90 frames per second. WebOccult was able to heavily customize its solution with the Voyager SDK and complete it within 30 days. These aren't aspirational use cases.
They're production systems running today, solving problems that directly impact business operations.

The notable systems at CES shared these traits:

- Tooling that works with existing models, not against them
- Architectures designed for power-constrained environments
- Systems that operate reliably without cloud dependencies
- Transparency around deployment requirements

As edge AI moves from aspiration to reality, the industry’s focus is shifting from what could be possible to what can be deployed, scaled, and supported. The technologies that succeed won't be those with the highest theoretical performance. They'll be the ones that solve real problems under real constraints.

CES didn’t mark the arrival of edge AI. It marked the moment edge AI started being taken seriously.

And that’s a far more interesting place to be.
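The CES workaround described above pairs a text-only LLM with a closed-set segmentation model. It can be sketched as a small bridge between the two. This is an assumption about how such a bridge might look, not the code behind the CES demo. The LLM is passed in as a plain callable, so any client could sit behind it, and the prompt wording is hypothetical. `COCO_LABELS` is a ten-class excerpt of the 80 COCO categories, and detections are assumed to be dictionaries with a `label` key.

```python
from typing import Callable, Dict, List

# Ten of the 80 COCO category names, enough for the example.
COCO_LABELS = ["person", "bicycle", "car", "dog", "cat",
               "backpack", "bottle", "cup", "knife", "laptop"]


def query_to_labels(query: str, llm: Callable[[str], str],
                    labels: List[str]) -> List[str]:
    """Use a text-only LLM to map a free-form request onto known class names."""
    prompt = (
        "Answer with a comma-separated list using only these labels: "
        + ", ".join(labels)
        + "\nRequest: " + query
    )
    reply = llm(prompt)
    allowed = set(labels)
    chosen: List[str] = []
    for name in reply.split(","):
        name = name.strip().lower()
        # Drop anything the closed-set model cannot detect, and duplicates.
        if name in allowed and name not in chosen:
            chosen.append(name)
    return chosen


def filter_detections(detections: List[Dict], wanted: List[str]) -> List[Dict]:
    """Keep only segmentation results whose class label was requested."""
    return [d for d in detections if d["label"] in wanted]


if __name__ == "__main__":
    def fake_llm(prompt: str) -> str:
        # Stand-in for a real LLM client, for illustration only.
        return "Cup, bottle, mug, cup"

    wanted = query_to_labels("find anything I could drink from", fake_llm, COCO_LABELS)
    print(wanted)  # ['cup', 'bottle']
    detections = [{"label": "cup", "score": 0.88}, {"label": "dog", "score": 0.95}]
    print(filter_detections(detections, wanted))  # [{'label': 'cup', 'score': 0.88}]
```

The key design choice is that the LLM's reply is validated against the detector's fixed vocabulary. A hallucinated or out-of-set class name, such as `mug` in the example, therefore never reaches the pipeline.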

Related products: AI Accelerators, Industry, Technology

Blog, Jan 5, 2026

Axelera AI: Looking Back on 2025

We anticipated a roller coaster of a year at Axelera AI in 2025, but in all honesty it still caught us by surprise just how much of a ride it’s been. Everything happened in such a whirlwind of creative techno-endeavour that it’s hard to even remember what things looked like this time last year. So it feels like the right time to reflect on what’s been accomplished, the partnerships we've built, and where we're headed next.

Opening Up: Voyager SDK Goes Public

If you can believe it, it was only in March that we took the huge step of making the Voyager SDK publicly available on GitHub. For a company built on making AI accessible to everyone, this felt like the natural thing to do. The response from the developer community has proven it was the right move, and it brought a huge groundswell of support from the wider AI community.

Counting that debut, we've shipped four major releases this year:

- v1.2.5 (March): The public debut, packed with tools, models, and sample pipelines
- v1.3 (June): Added experimental LLM support, Windows compatibility, YOLO11 models, and proper thermal management
- v1.4 (August): Brought YOLOv10, person re-identification, and face recognition
- v1.5 (November): Ubuntu 24.04 support (I love this one!), Python 3.12, and our 4-chip PCIe cards

Each release has been increasingly shaped by feedback from our community. Whether it's debugging customer issues or adding new models to the zoo, the collaboration has been genuinely invaluable.
You have driven Voyager forward just as much as we have.

Building the Developer Experience

Alongside the SDK going public, we rolled out the infrastructure to properly support developers, partners, businesses, makers, and everyone who’s as excited about AI as we are.

We redesigned axelera.ai from the ground up, launched a support portal packed with guides and documentation, published a growing GitHub repo full of examples and tutorials, and opened our webstore so anyone can get their hands on Metis hardware without going through a lengthy sales process.

And as you know (since you’re here right now), we built this community, which is now home to over 500 AI enthusiasts, developers, and makers. Watching developers help each other troubleshoot issues, share projects, and push the boundaries of what's possible with Metis has been one of the genuine highlights of the year. There's something satisfying about seeing a Raspberry Pi user in one thread helping solve a problem for someone running a multi-stream industrial deployment in another.

These touchpoints have become the foundation of how we’ll engage with AI developers from this year on. The goal was simple: make it easy to get started, easy to find answers, and easy to connect with others doing similar work. Based on the activity we're seeing, it seems to be working.

Expanding Platform Support

One of the quieter but still vitally important stories of 2025 has been the steady expansion of the platforms we officially support.

The Raspberry Pi 5 is now fully supported, which was a popular request from makers and prototypers. It actually started as a casual side project one of our engineers was tinkering with, but it performed so well that it sparked enthusiasm across the whole of Axelera for looking much more closely at single-board computer (SBC) form factors.
It became the springboard for bring-up guides for the Orange Pi 5 Plus and NanoPC-T6, which followed quickly for those building compact, cost-effective edge deployments.

For more demanding applications, we added support for Jetson Orin Nano and Jetson Orin NX, alongside systems running Intel Xeon D and W processors and AMD Ryzen 7 hosts. The Arduino Portenta X8 integration means industrial developers have a proper pathway too.

The underlying philosophy here is flexibility. Whether you're a student experimenting on a Raspberry Pi, a startup prototyping on Orange Pi, or an enterprise deploying on industrial-grade x86 systems, we want Metis to just work. Each release has pushed that vision a bit further, and we can honestly say that we reach these milestones in exactly the same way you do: we experiment, test, iterate, and fail a bunch of times, but ultimately find that the ecosystem is capable of doing whatever we can imagine.

New Hardware: From Metis to Europa

Our hardware lineup grew significantly this year, with each addition shaped by what developers actually need.

The 4-chip Metis PCIe card was developed as a true flagship Metis device: one that packs in more inference power without taking up more space in your system. More streams, more models, more throughput. More AIPUs on one board. It's built for video analytics across dozens of cameras, or industrial systems running cascaded pipelines. If you've outgrown a single chip but don't want to rearchitect your software, this is the upgrade path we wanted to deliver.

The Metis Compute Board solves a different problem. Too many customers were spending more time on host system integration than on building AI applications. So we gave them a complete system: plug in power, connect a display, run inference. It's found its home in rapid prototyping, compact IoT deployments, and anywhere that "it just works" matters most.
The CES Innovation Awards recognition was a nice bonus.

Metis M.2 Max, announced in September, came from growing demand for LLMs at the edge. The original M.2 is brilliant for computer vision, but generative AI simply needs more memory bandwidth. M.2 Max will deliver that while keeping the same tiny footprint and low power draw, and it's ruggedised for harsher environments.

Then there's Europa, unveiled in October. This is where we're heading next: second-generation AI cores for workloads that blur the line between edge and datacenter. Multiple 4K streams, multi-user generative AI, and compute density that previously meant expensive GPU infrastructure. Europa is built to handle all of it as the whole industry moves inevitably towards the edge.

We also announced Titania, our AI inference chiplet for high-performance computing, developed as part of the EuroHPC DARE project and aimed at supercomputing applications.

Europa and Titania won't ship until 2026 and beyond, but 2025 was the year they came to life. Even as we shipped Metis products to customers, our hardware teams already had an eye on what needs to come next.

Funding and Support

In March, we secured €60 million in funding from the EuroHPC Joint Undertaking and member states. This wasn't just money, though; it was validation that Europe is serious about building sovereign AI infrastructure. Combined with our previous Series B funding, we've now raised over $200 million. That’s a lot of faith that the sector and the region have placed in Axelera AI.

We were also selected for the EIC Step Up programme, which provides significant additional equity investment to help scale operations. As Bloomberg reported in August, we're deep in discussions for an additional round to expand our edge AI and datacenter activities even further next year.

Building an Ecosystem

One of the highlights of 2025 was formalising partnerships that genuinely matter.
Not just to us, but to the European AI sector in general.

Our collaboration with Arduino, announced late last year, came to life at CES in January. Pairing our Metis accelerators with Arduino's Portenta modules means millions of developers can now access proper AI acceleration in a familiar environment. It's exactly the kind of democratisation we set out to achieve from day one.

In June, we launched the Partner Accelerator Network with founding members including Aetina, Arduino, Astute, C&T Solution, Eurocomposant, Macnica, and Seco. This isn't just a logo wall to fancy up our website, by the way. It's an actual, active ecosystem of partners helping customers move from proof-of-concept to production. It’s the bedrock of what Axelera is building.

The partnership with the European Space Agency deserves a special mention, too. ESA chose Axelera because of our sovereign technology and long-term availability. When your hardware might spend a decade in space, those things matter. It's humbling to think our chips could help answer some of the universe's biggest questions (and it’s just cool as hell to have your gear in orbit!).

We've also deepened ties with Lenovo, Dell, Advantech, and Micron, and validated our platform on BalenaOS for fleet deployments. And most recently, we teamed up with Ultralytics, the creator of YOLOv8 and YOLO11, in what’s set to be an epic leap forward now that our acceleration is integrated directly with Ultralytics models.

Showing Up

We've been crazy busy at industry events this year too.
Highlights include:

- CES 2025 in Las Vegas, where we were named a CES Innovation Awards Honoree for the Metis Compute Board
- Embedded World 2025 in Nuremberg, showcasing solutions with duagon and Arduino
- ISC West 2025, where we demonstrated real-time YOLOv8l inference on 8K video
- COMPUTEX 2025 in Taiwan, displaying our growing partner ecosystem
- Web Summit 2025 in Lisbon, discussing sovereign AI

We've also participated in important European policy discussions, including the AI Continent Action Plan launch in Brussels, the French AI Action Summit, and the State of Dutch Tech.

What It All Means

Looking at 2025 as a whole, a few themes emerge.

First, edge AI is real, and 2025 was the year it arrived for everyone. The gap between what's theoretically possible and what actually works in production environments is rapidly closing. Our customers are deploying real solutions for retail, manufacturing, security, transportation, and more.

Second, European tech sovereignty matters. The funding, the partnerships, and the policy engagement all point to genuine momentum behind building AI infrastructure that isn't dependent on a single geography or vendor.

Third, and most importantly, none of this happens without the community. The developers filing issues on GitHub, the partners building solutions, the customers pushing us to do better: thank you all for joining us.

What's Next

Europa ships in 2026. The M.2 Max won’t be far behind. Titania is well on track, too. We'll keep improving Voyager SDK based on what you tell us you need. And we'll keep working to make AI accessible, efficient, and genuinely useful.

As our CEO Fabrizio likes to say: it's still day one. The best is yet to come.

Related products: Company

Blog, Nov 12, 2025

The Hidden Third Dimension of Edge AI: Why Infrastructure Origin Matters