The AI industry has spent the last few years in a language arms race: bigger LLMs, faster token generation, more natural conversation. The implicit assumption is that the pinnacle of human-machine interaction (HMI) is getting a computer to understand and respond to our words. But the more projects I see here in the Axelera AI community, the less essential that objective seems to be.

I’ve become a bit fascinated not just by HMI systems but by HAII (human-AI interaction, if there is such a designation). Here's a number I found on my travels that's worth sitting with.

Human speech transmits roughly 39 bits of information per second.

That rate holds roughly steady across the languages researchers have measured. Meanwhile, visual processing is estimated to handle around 10 million bits per second. We've spent billions of dollars optimising AI for our slowest, most ambiguous communication channel, and largely ignored the one that's roughly 250,000 times faster.
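If you want to check that multiplier yourself, here's the arithmetic as a tiny sketch. The two constants are just the figures quoted above, and the function name is mine, not anything official.

```python
# Figures quoted in the text above; both are rough estimates.
SPEECH_BITS_PER_SECOND = 39
VISION_BITS_PER_SECOND = 10_000_000


def bandwidth_ratio(fast_bps: float, slow_bps: float) -> float:
    """Return how many times faster one channel is than another."""
    return fast_bps / slow_bps


ratio = bandwidth_ratio(VISION_BITS_PER_SECOND, SPEECH_BITS_PER_SECOND)
print(f"Vision is about {ratio:,.0f}x faster than speech")
```

The exact quotient lands a little above 250,000, so the rounded figure is fair as a ballpark.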

The race to shrink a 100-billion-parameter language model onto an edge device makes for great headlines. But a camera connected to an AI accelerator isn't just a language-free alternative to a chatbot. It's a multifaceted sensor that can see gestures, read posture, understand spatial context, detect presence, interpret movement patterns, and recognise expressions, all simultaneously, all in real time, all without a single spoken word.

And people in this community are already building exactly that, which is what led me down this post-linguistic rabbit hole in the first place.

Machines Don't Care How You Talk to Them

We tend to assume language is the ultimate interface because it's the ultimate interface for us. It's how we coordinate, build culture, tell stories. But machines have no such preference. A pointed finger, a nodded head, a change in posture, or simply walking into a room are all equally valid inputs, at least as far as an AI model is concerned. In many cases, they're better inputs: less ambiguous, faster to process, and impossible to misspell.

Check out how

And it works at three meters, which is more than most people can say for their voice assistant. Especially now that Alexa is being deliberately lobotomised to sell more Prime memberships, but that’s a rant for another day.

We Already Know Language Isn't Required

This isn't even a new idea. We've just forgotten. Babies communicate through gesture months before they can speak. Ursula Bellugi's landmark study at the Salk Institute found that sign language conveys the same information as speech using 42% fewer units, because signing exploits three spatial dimensions simultaneously rather than the single timeline of spoken words. Koko the gorilla learned over 1,000 signs and invented compound terms like "finger-bracelet" for ring. Dogs are now using communication buttons to construct multi-word phrases. Language is one tool among many, and not always the sharpest.

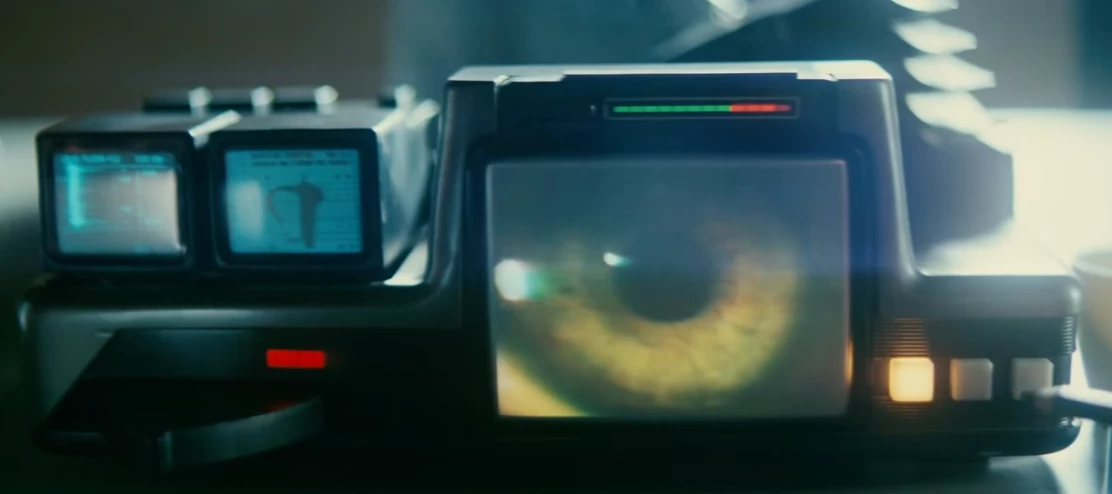

Sci-fi has been rehearsing this transition for decades. (Yeah, anyone who knows me has been wondering how long it’d take before I got all sci-fi about this. Whatevs 😏 ). Minority Report gave us gesture-controlled data manipulation. Tony Stark's workshop combines hand tracking, gaze direction, and spatial awareness. Blade Runner 2049's Joi doesn't wait for commands; she detects proximity, reads emotional context, and adapts. The most interesting fictional interfaces are never about speaking louder. They're about machines that understand posture, gaze, movement, and context. That understand what humans are doing, not saying.

This community is already exploring that same territory. Air Piano turns finger tracking into musical expression, no instrument required. Air Steering replaces a physical game controller with gesture recognition. Rock Paper Scissors Lizard Spock demonstrates that edge AI can reliably distinguish between five similar hand shapes in real time, which is the foundation for building entire gesture vocabularies. And

The Quiet Revolution: Machines That Just Understand

The most radical version of this idea isn't gesture control at all. It's the elimination of explicit commands entirely.

MotionFlow reimagines the humble motion sensor. Instead of "something moved," it distinguishes between people, pets, and environmental motion, triggering context-aware automations based on who is where and what they're doing. You don't tell the house to turn on the lamp. The house sees you've sat down in the reading chair with a book and just does it.
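To make the reading-chair example concrete, here's a minimal sketch of what a context-aware rule could look like once a vision model has done the hard part. This is not MotionFlow's actual code. The `Detection` record, the zone name `reading_chair`, and the activity labels are all hypothetical, invented purely for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Detection:
    """One hypothetical output record from an upstream vision model."""
    label: str     # what was seen, e.g. "person" or "pet"
    zone: str      # named region of the room (an assumption of this sketch)
    activity: str  # coarse activity label, e.g. "sitting" or "walking"


def lamp_should_turn_on(detections: list[Detection]) -> bool:
    """True only when a person (not a pet) is sitting in the reading chair."""
    return any(
        d.label == "person"
        and d.zone == "reading_chair"
        and d.activity == "sitting"
        for d in detections
    )


frame = [
    Detection("pet", "reading_chair", "sitting"),
    Detection("person", "doorway", "walking"),
]
print(lamp_should_turn_on(frame))  # the cat on the chair doesn't count
```

The point of the sketch is that the rule keys on who is where and what they're doing, rather than on a bare "something moved" signal.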

Is that communication? In the strictest sense, yes, I think it is. It’s framed around system control, but it starts with communication. You've conveyed information ("I'm here, I'm settling in, I need light") and received a response (the lamp turns on), all without forming a single conscious thought about it. That's a higher-bandwidth interaction than asking Alexa to do something (three times), and it happened in zero words.

Elderly Guardian takes this further into healthcare, using pose estimation to detect falls, unusual inactivity, or emergencies through passive observation. RehabVision tracks rehabilitation exercises and assesses form through body movement alone. These aren't gimmicks. They're real applications where language would actually be a worse interface. And it’s early days for all these HAII systems. Okay, right now the AI just reacts, but it could just as easily convey a response in an equally post-linguistic way, if we have the creativity to think beyond language.
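For a feel of how pose keypoints can turn into a "possible fall" signal, here's a deliberately simplified heuristic: measure how far the torso has tilted away from vertical. I'm not claiming this is how Elderly Guardian works. The keypoint format (pixel coordinates with y pointing down), the function names, and the 60-degree threshold are all assumptions of this sketch, and a real system would also look at motion over time.

```python
import math

Point = tuple[float, float]  # (x, y) in image pixels, y grows downward


def torso_angle_from_vertical(shoulder_mid: Point, hip_mid: Point) -> float:
    """Angle in degrees between the hip-to-shoulder line and vertical.

    0 means perfectly upright, 90 means perfectly horizontal.
    """
    dx = shoulder_mid[0] - hip_mid[0]
    dy = shoulder_mid[1] - hip_mid[1]
    return math.degrees(math.atan2(abs(dx), abs(dy)))


def looks_like_fall(
    shoulder_mid: Point, hip_mid: Point, threshold_deg: float = 60.0
) -> bool:
    """Flag a pose whose torso is tilted past the (assumed) threshold."""
    return torso_angle_from_vertical(shoulder_mid, hip_mid) > threshold_deg


standing = looks_like_fall((100.0, 50.0), (100.0, 150.0))
lying = looks_like_fall((50.0, 200.0), (150.0, 205.0))
print(standing, lying)
```

A single-frame check like this would also fire on someone lying on a sofa, which is exactly why context (where, for how long, how quickly it happened) matters.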

The Edge AI Angle Everyone's Missing

So I don’t think we need a 70-billion-parameter model to wave at a lamp. We need a camera, a well-trained vision model, and an AI accelerator with enough performance to run inference in real time, locally. That's it. No cloud round-trip, no transcription pipeline, no natural language understanding stack, no token generation. Just perception, action and response. We get asked a lot about LLMs on Metis and Europa, which I totally get: the AI sector is still hung up on that hook. We want LLMs, but I’m not sure we’re stopping to ask if we need them yet. That day’s coming, though.

This is where edge AI gets genuinely interesting. Not as a way to run a slightly worse chatbot locally, but as the enabler of entirely new interaction paradigms that cloud-based language models can't touch. Real-time gesture recognition needs sub-20ms latency. Presence detection needs to be always-on and private. Pose estimation for healthcare can't send video to a server. These are fundamentally edge workloads, and they represent a vision of AI that's arguably more transformative than a better autocomplete. What we’re lacking is a way for that same vision-, context-, presence- or sensor-based AI to respond in ways we’ll understand or, more accurately, accept.
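Here's a rough way to think about that sub-20ms target as a budget. Every per-stage number below is made up for illustration (these are not measurements from Metis, Europa, or any real pipeline), and the stage names are my own. The only figure taken from the text is the 20ms budget.

```python
LATENCY_BUDGET_MS = 20.0  # the sub-20ms target mentioned above


def total_latency_ms(stages: dict[str, float]) -> float:
    """Sum the per-stage latencies of a pipeline, in milliseconds."""
    return sum(stages.values())


def within_budget(
    stages: dict[str, float], budget_ms: float = LATENCY_BUDGET_MS
) -> bool:
    """True when the end-to-end latency stays under the budget."""
    return total_latency_ms(stages) < budget_ms


# Hypothetical, illustrative numbers only.
edge_pipeline = {
    "capture": 4.0,
    "preprocess": 2.0,
    "inference": 8.0,
    "postprocess": 1.0,
}
# Same pipeline with an assumed network round trip added on top.
cloud_pipeline = {**edge_pipeline, "network_round_trip": 50.0}

for name, stages in (("edge", edge_pipeline), ("cloud", cloud_pipeline)):
    print(name, total_latency_ms(stages), within_budget(stages))
```

Whatever the real numbers turn out to be for a given setup, the shape of the argument is the same: once a network hop is in the loop, it tends to dominate the budget on its own.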

We're currently saying "please" and "thank you" to chatbots (which, by the way, Sam Altman once said has cost OpenAI tens of millions of dollars in compute!). There's nothing wrong with being polite. In fact, it’s good practice as a human being. But it does epitomise how we've imported all the overhead and inefficiency of human social conventions (organic bloatware) into a relationship that doesn't need them.

So maybe the future isn't about getting machines to understand our language better. Maybe it's about realising and ultimately accepting that they never needed it in the first place.

What do you think? Are there other interaction modalities you'd like to explore? Have you built something that communicates with AI without words? Drop your thoughts below!