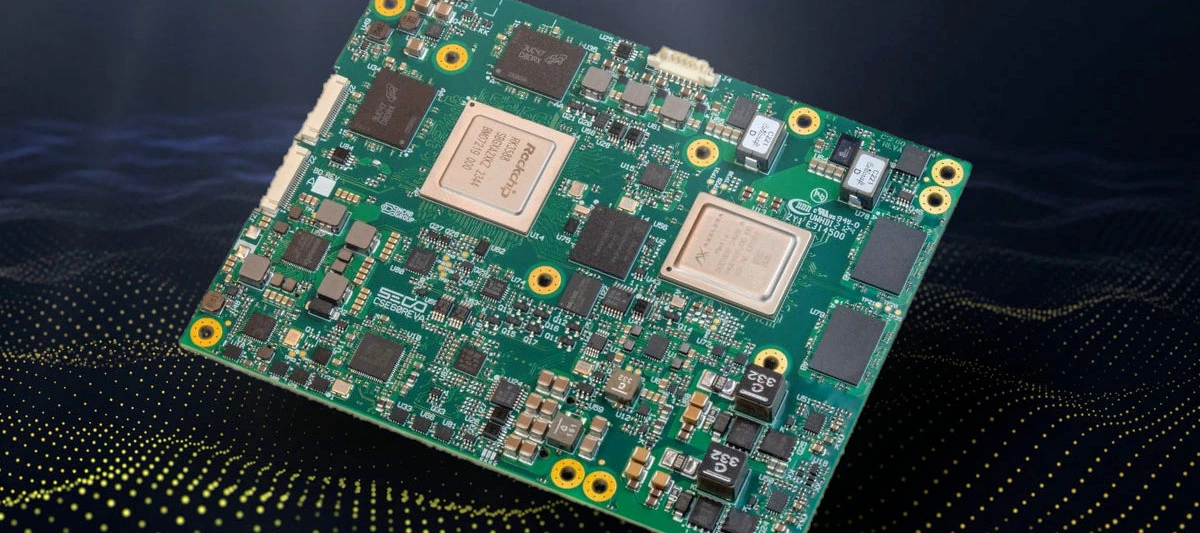

Great piece from SECO that showcases what’s possible when you combine the Metis AIPU with their SOM-COMe-BT6-RK3588 module. It’s a solid breakdown of how edge-native AI hardware is reshaping vehicle inspection, both in manufacturing and post-sales scenarios like insurance claims.

The setup:

- Rockchip RK3588 (4x Cortex-A76 + 4x Cortex-A55)
- Metis AIPU delivering up to 214 TOPS
- Up to 8 HD video feeds processed in parallel
- Real-time object detection + OCR
- <50ms latency per stream, at the edge

No cloud. No data back and forth. Just low-latency, high-throughput inferencing where the data is created.

They also go into the pipeline architecture (camera -> local memory -> CPU/VPU pre-processing -> AIPU for inference -> post-processing -> system output) and how this gets mapped to Metis efficiently.
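To make the stage order concrete, here is a minimal Python sketch of that flow as a chain of functions, with a simple check against the 50 ms figure from the setup list. Every name in it (`STAGES`, `make_stage`, `run_pipeline`) is hypothetical and only for illustration; none of this is Voyager SDK or SECO code, and the stages are empty placeholders rather than real capture or inference.

```python
import time

# Stage names taken from the pipeline described in the post.
STAGES = [
    "camera",
    "local memory",
    "CPU/VPU pre-processing",
    "AIPU inference",
    "post-processing",
    "system output",
]


def make_stage(name):
    """Build a placeholder stage that only records that it ran (hypothetical)."""
    def stage(frame):
        frame["trace"].append(name)
        return frame
    return stage


PIPELINE = [make_stage(name) for name in STAGES]


def run_pipeline(frame_id, budget_ms=50.0):
    """Push one frame through every stage in order and time the whole pass."""
    frame = {"id": frame_id, "trace": []}
    start = time.perf_counter()
    for stage in PIPELINE:
        frame = stage(frame)
    frame["elapsed_ms"] = (time.perf_counter() - start) * 1000.0
    frame["within_budget"] = frame["elapsed_ms"] < budget_ms
    return frame


if __name__ == "__main__":
    result = run_pipeline(frame_id=0)
    print(" -> ".join(result["trace"]))
    print(f"elapsed: {result['elapsed_ms']:.3f} ms, within budget: {result['within_budget']}")
```

In a real deployment each placeholder would be a hardware-backed step and the stages would overlap across frames instead of running strictly one after another, so treat this purely as a picture of the ordering.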

And they’re running YOLOv8s on 8x 1080p60 streams with room to spare, which is impressive!
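For a sense of scale, here is some quick back-of-the-envelope arithmetic on that workload. It assumes "1080p60" means 1920x1080 at 60 fps and uses the <50ms per-stream latency figure from the setup list; the variable names are just for illustration.

```python
STREAMS = 8
WIDTH, HEIGHT = 1920, 1080   # assumption: "1080p" = 1920x1080
FPS = 60                     # assumption: "p60" = 60 frames per second
LATENCY_BUDGET_MS = 50       # from the "<50ms latency per stream" figure

# Aggregate frame rate the system has to sustain across all cameras.
frames_per_second = STREAMS * FPS                         # 480

# Raw pixel volume before any resizing for the model input.
pixels_per_frame = WIDTH * HEIGHT                         # 2,073,600
pixels_per_second = frames_per_second * pixels_per_frame  # 995,328,000

# How many frame intervals of a single 60 fps stream fit into the latency budget.
frame_intervals_in_budget = LATENCY_BUDGET_MS * FPS / 1000  # 3.0

print(f"aggregate frame rate: {frames_per_second} frames/s")
print(f"raw pixel rate: {pixels_per_second:,} pixels/s")
print(f"latency budget: {frame_intervals_in_budget} frame intervals per stream")
```

So that is 480 inferences per second overall, and each frame has to clear the whole pipeline within about three frame intervals of its own stream.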

It’s a solid reference if you’re thinking about multi-camera setups, inspection tasks, or embedded deployments with real constraints around power, latency or network.

👉 Here’s the blog link if you want to check it out

Also cool to see how well this aligns with the workflows we’ve been using in the Voyager SDK, from GStreamer pipelines to YAML-based deployment and quantised model optimisation.

It’d be interesting to hear how others are approaching similar challenges or if anyone’s played around with VC aggregation (virtual channels) in their own builds.