Picture this: You’re heading to the mall with the whole family. The kids are excited in the back seat, already arguing over who gets to pick the first activity once you’re inside. You pull into the massive parking lot… and the nightmare begins.

You start circling. Row after row. Every spot seems taken. The excitement in the car slowly turns into frustration. “Are we there yet?” becomes “Why is this taking so long?” The kids grow restless, voices rising, while you keep looping around hoping for that one open spot. Minutes tick by. Fuel burns. Patience runs thin. What should be a fun family outing starts with unnecessary stress — all because no one knows where the empty parking spots actually are.

SmartPark solves exactly that frustration.

It’s a complete local-first, edge-AI smart parking system built to run on the Axelera Metis. Using real-time computer vision, it detects open parking spots as they free up and gives operators (and eventually drivers) a clear, live view of the parking lot.

The Problem It Solves

- Drivers waste precious time, fuel, and patience endlessly circling for parking.

- Mall and parking operators have little to no real-time visibility across their lots.

- Existing solutions are often expensive, cloud-dependent, and slow to respond.

SmartPark fixes this with a fast, private, and efficient edge-based solution that runs entirely on affordable hardware.

Cost Comparison: SmartPark vs Traditional Systems

One of the biggest advantages of SmartPark is its dramatically lower total cost of ownership compared to legacy solutions.

| Aspect | Traditional Parking Sensors (per-spot) | SmartPark (RTSP Cams + Orange Pi + Metis) | Camera System on Mac Mini |
|---|---|---|---|
| Hardware Cost (per 100 spots) | $30,000 – $50,000+ (1 sensor per spot + gateways) | $800 – $1,800 (4–6 cheap RTSP cams + Orange Pi + Metis card) | $2,500 – $4,000 (cams + Mac Mini) |
| Installation | Very High (drilling, wiring per spot, lot closures) | Low (mount cams on poles/lights, minimal cabling) | Medium (cams + running cables to central Mac) |
| Power & Connectivity | High (many sensors need individual power/SIMs) | Very Low (edge device handles multiple streams) | Medium-High (Mac Mini is power-hungry) |
| Maintenance | Medium-High (sensor failures, battery replacements) | Low (standard IP cams are reliable) | Medium (Mac Mini upkeep + OS updates) |
| Scalability | Expensive (linear cost per added spot) | Excellent (add cams cheaply, one Metis handles many streams) | Good but limited by single machine |
| Privacy & Cloud Dependency | Often cloud-based, privacy concerns | Fully local-first, no cloud needed | Can be local but heavier setup |
| AI Performance | Basic counting only | Full YOLO spot detection + classification (EV, accessible, etc.) on Metis | Strong but higher power draw |

SmartPark wins on cost-efficiency while delivering richer data (spot-level details, filters for EV/accessible spots, analytics) thanks to the Axelera Metis running efficient YOLO inference at the edge.
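To put the hardware row in per-spot terms, here is a small arithmetic sketch. It only divides the ranges quoted in the table by 100 spots; the dictionary and function names are made up for illustration, and the "$50,000+" upper bound is treated as a plain 50,000.

```python
# Hardware cost ranges per 100 spots in USD, copied from the comparison table.
# The traditional upper bound is listed as "$50,000+", so 50_000 is a floor.
HARDWARE_COST_PER_100_SPOTS = {
    "Traditional per-spot sensors": (30_000, 50_000),
    "SmartPark (RTSP cams + Orange Pi + Metis)": (800, 1_800),
    "Camera system on Mac Mini": (2_500, 4_000),
}


def per_spot_range(cost_range, spots=100):
    """Convert a (low, high) lot-level cost range into a per-spot range."""
    low, high = cost_range
    return low / spots, high / spots


for name, cost_range in HARDWARE_COST_PER_100_SPOTS.items():
    low, high = per_spot_range(cost_range)
    print(f"{name}: ${low:.0f} to ${high:.0f} per spot")
```

On these figures, SmartPark hardware comes to roughly $8 to $18 per spot, against $300 to $500 or more for per-spot sensors.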

How It Works

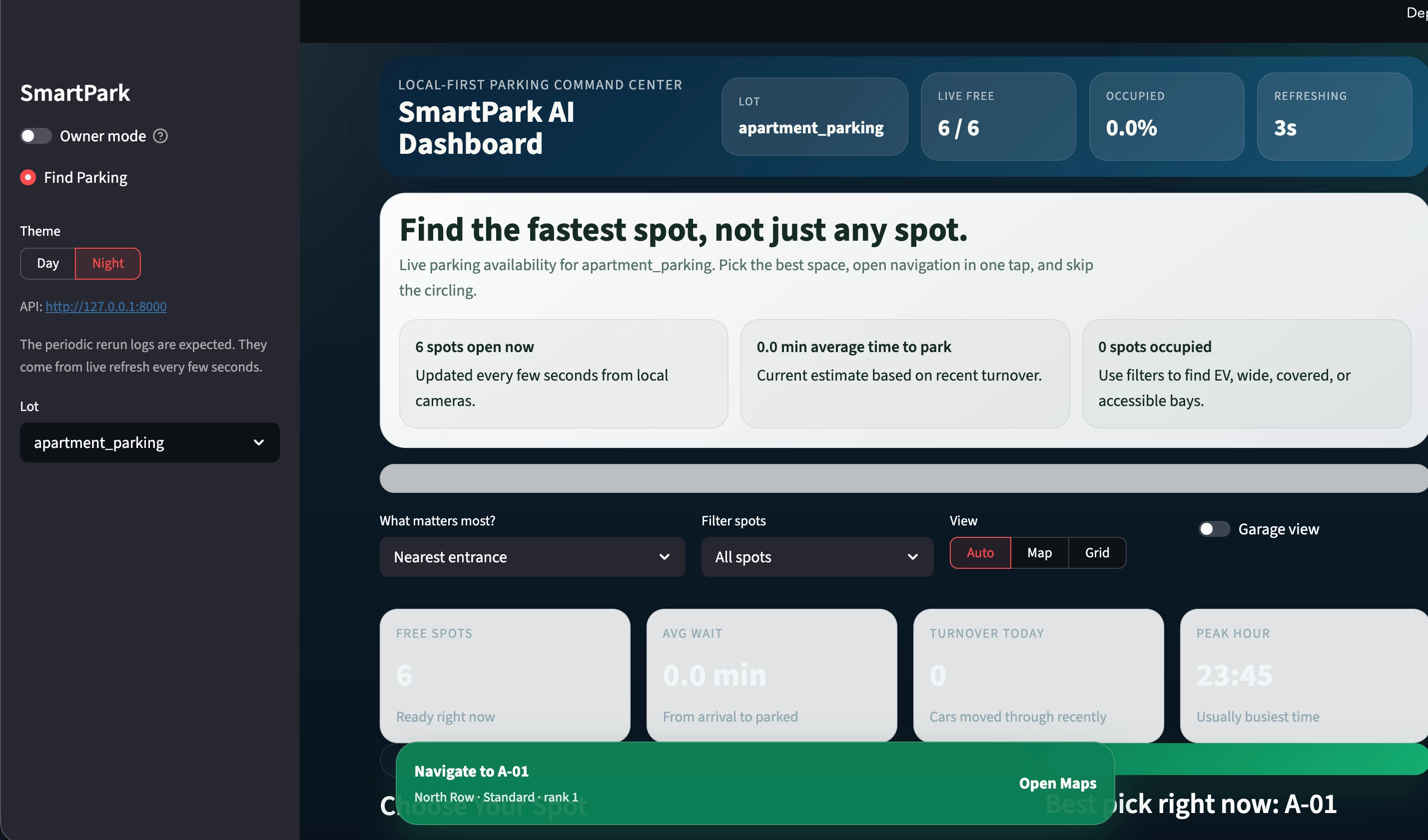

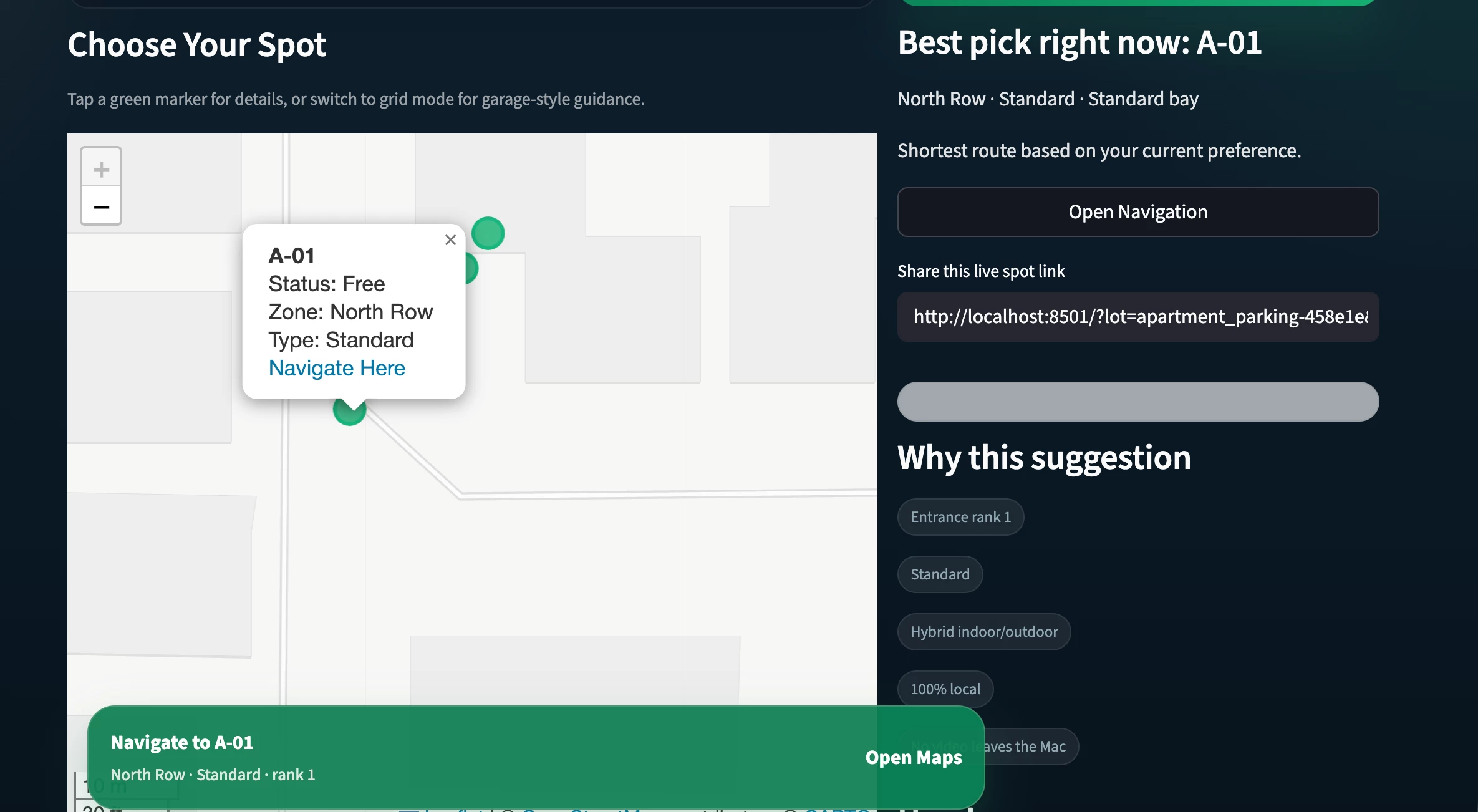

- Metis-Powered Vision: A YOLO-based ParkingManagement model runs efficiently on the Axelera Metis AIPU using the Voyager SDK. Multiple RTSP camera feeds are processed in real time to detect occupied vs. free parking spots. Only changed spot states are sent forward, keeping everything lightweight and responsive.

- Local-First Backend
  - FastAPI with WebSocket support for live updates
  - SQLite as the lightweight, zero-config database
  - Simple incremental ingestion endpoint (POST /ingest/slots) for easy integration
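The "only changed spot states are sent forward" idea, together with incremental ingestion into SQLite, can be sketched roughly as follows. This is a minimal illustration using only the Python standard library, not the code in the repo: the function names, the `slots` table layout, and the `"free"` / `"occupied"` state strings are all assumptions.

```python
import sqlite3


def changed_slots(previous, current):
    """Return only the slots whose state is new or differs from the last known state."""
    return {
        slot_id: state
        for slot_id, state in current.items()
        if previous.get(slot_id) != state
    }


def apply_changes(conn, lot_id, changes):
    """Persist changed slot states to SQLite (hypothetical schema)."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS slots ("
        "lot_id TEXT, slot_id TEXT, state TEXT, "
        "PRIMARY KEY (lot_id, slot_id))"
    )
    # INSERT OR REPLACE overwrites the row for an existing (lot_id, slot_id) pair.
    conn.executemany(
        "INSERT OR REPLACE INTO slots (lot_id, slot_id, state) VALUES (?, ?, ?)",
        [(lot_id, slot_id, state) for slot_id, state in changes.items()],
    )
    conn.commit()


# Two consecutive detection results for the same lot (made-up data).
previous = {"A1": "free", "A2": "occupied", "A3": "free"}
current = {"A1": "occupied", "A2": "occupied", "A3": "free", "A4": "free"}

delta = changed_slots(previous, current)  # only A1 and A4 changed or appeared

conn = sqlite3.connect(":memory:")
apply_changes(conn, "westfield-top-deck", delta)
rows = conn.execute("SELECT slot_id, state FROM slots ORDER BY slot_id").fetchall()
```

In a real deployment the delta would presumably be the body of the POST /ingest/slots request, so unchanged spots never cross the wire. This sketch does not handle spots that disappear from a camera's view.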

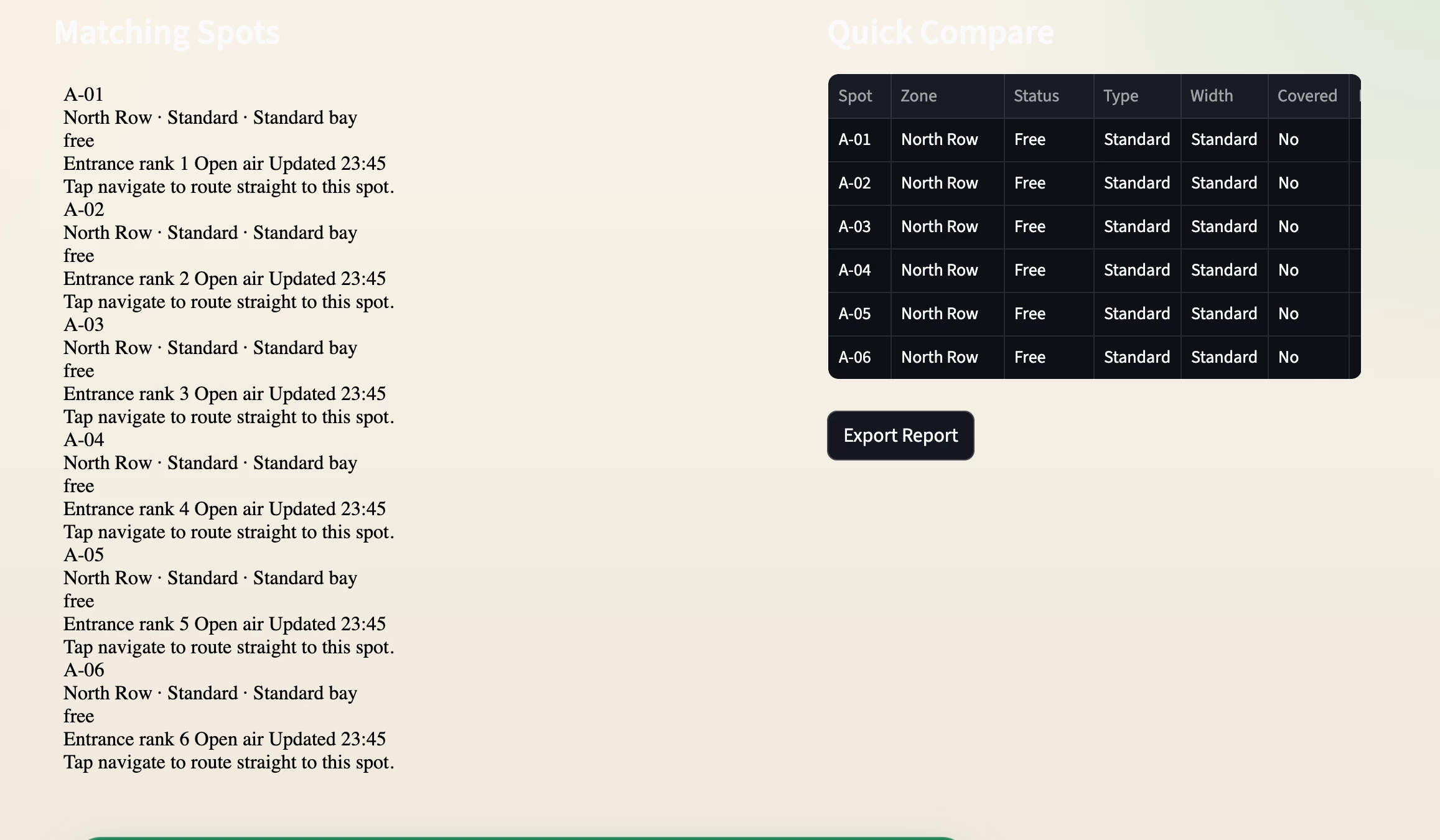

- Operator Dashboard (Streamlit)
  - Live occupancy overview with beautiful charts and lot maps
  - Filters for EV charging spots, accessible spots, wide spots, and covered areas
  - Real-time analytics: trends, estimated wait times, turnover rates, and peak hours
  - CSV export for reports and snapshots
  - Camera health monitoring and admin tools to manage lots or simulate data
  - Alert system for low capacity, camera issues, or operational events
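As a rough idea of what the dashboard filters and the occupancy overview compute, here is a small sketch over in-memory spot records. The `Spot` dataclass, its field names, and both helper functions are hypothetical and chosen only to mirror the filter categories listed above; the actual data model in the repo may differ.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Spot:
    """Hypothetical spot record with one flag per dashboard filter."""
    spot_id: str
    occupied: bool
    ev: bool = False
    accessible: bool = False
    wide: bool = False
    covered: bool = False


def filter_spots(spots, **required):
    """Keep spots whose attributes match every required flag, e.g. ev=True."""
    return [
        spot
        for spot in spots
        if all(getattr(spot, name) == value for name, value in required.items())
    ]


def occupancy_rate(spots):
    """Fraction of spots currently occupied (0.0 for an empty list)."""
    if not spots:
        return 0.0
    return sum(spot.occupied for spot in spots) / len(spots)


# Made-up sample lot.
spots = [
    Spot("A1", occupied=True, ev=True),
    Spot("A2", occupied=False, ev=True),
    Spot("B1", occupied=False, accessible=True),
    Spot("B2", occupied=True),
]

free_ev = filter_spots(spots, ev=True, occupied=False)
print([spot.spot_id for spot in free_ev], occupancy_rate(spots))
```

Combining flags in one call (for example `covered=True, occupied=False`) gives the "free spots matching these filters" view an operator would typically want.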

The entire system seeds with realistic demo data, so you can spin it up instantly and see live updates even before connecting real cameras.

Tech Stack Highlights (Metis Edition)

- Axelera Metis + Voyager SDK → High-performance, low-power YOLO inference for multiple camera streams

- Python / FastAPI / WebSockets → Responsive real-time API layer

- Streamlit → Clean, intuitive operator dashboard

- SQLite → Simple and reliable local persistence

- YOLO worker → Clean separation between vision processing and the dashboard

Quick demo command to try it:

    python -m smartpark.worker demo --lot-id westfield-top-deck --cycles 10 --poll-seconds 2

I built SmartPark to show how the Axelera Metis can solve everyday real-world problems with efficient edge AI. Instead of families wasting time circling parking lots in frustration, SmartPark helps them find a spot quickly so the fun can start sooner, all at a fraction of the cost of traditional systems.

Repo: https://github.com/shashibhat/SmartPark (Complete setup instructions, API docs, and demo data included)

I’d love to hear feedback from the Axelera community — especially from anyone working with multi-camera YOLO pipelines on Metis. Feel free to try it out and let me know what you think!

Let’s make endless circling for parking a thing of the past. 🚗✨

#AxeleraMetis #DemoJam #SmartParking #EdgeAI #YOLO

Thank you for the opportunity

Regards,

Shashi