A camera sees everything and understands nothing. For decades, that has been the fundamental limitation of physical security at scale: vast amounts of footage, limited ability to act on it in real time. The gap between what a camera captures and what a security team can actually respond to is where incidents happen. Edge AI closes that gap.

At ISC West 2026, we built a working example of exactly that: a live, multi-camera edge AI security system running in a crowded convention space, tracking persons of interest, detecting novel threats in real time, and keeping every frame of footage on-site. Here is what it looks like.

The problem is not the cameras. It is what happens after.

Modern physical security operations are sitting on a significant and largely untapped asset: high-resolution camera infrastructure that covers more ground, captures more detail, and retains more data than any previous generation of technology. The hardware investment is already made. The feeds are already live.

The challenge is that more cameras have not made the security team's job easier. They have made it larger.

According to a 2024 survey by MSSP Alert and CyberRisk Alliance, 62% of security alerts are ignored entirely. When every feed is an equal priority, none of them is. Security teams at major airports, sporting venues, and large-scale public events are experienced professionals making triage decisions under pressure, and those are decisions AI should support rather than leave entirely to human judgment.

The financial picture compounds this. Legacy approaches to scaling security have been linear and expensive:

- More cameras means more storage, more bandwidth, more cloud processing costs
- More data means more staff hours reviewing footage after the fact
- More cloud processing means more exposure to the governance questions that compliance and legal teams are increasingly being asked to answer

And that third point has a way of becoming the most expensive one. Moving video footage and biometric data to the cloud creates regulatory exposure, and the rules behind that exposure are tightening globally. Data residency requirements, biometric privacy laws, and AI governance frameworks are evolving faster than most enterprise procurement cycles. Every frame that leaves the building is a question your legal team may eventually have to answer.

A note on getting it wrong: The cost of failure in security AI runs in both directions. False positives disrupt operations, damage trust in the system, and create the kind of alert fatigue that leads operators to start ignoring alerts altogether. False negatives are worse. Confidence in the system's outputs, not just its speed, is what separates a tool security teams rely on from one they work around.

Why most edge AI platforms ask you to make a trade-off

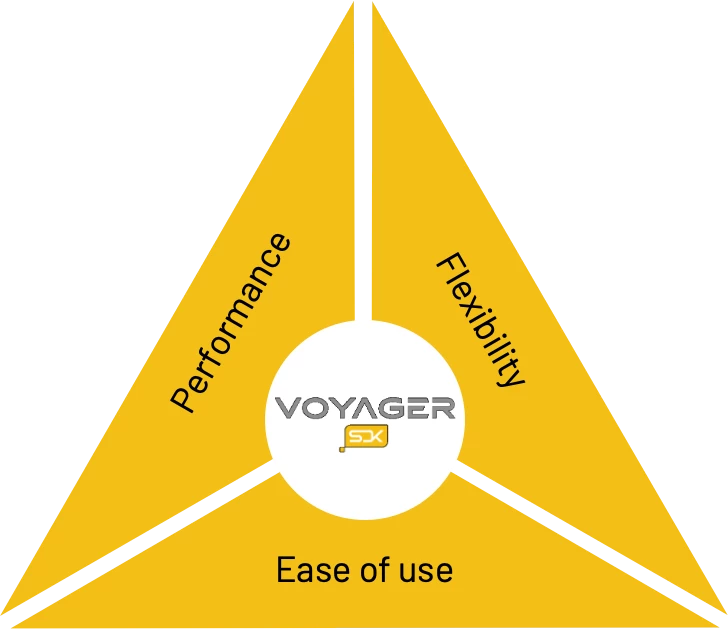

The embedded vision industry has spent years normalising a problem it has not fully solved. Delivering a capable edge AI platform means satisfying three requirements simultaneously.

The embedded vision trade-off most platforms ask you to accept:

- Performance: the processing power to run multiple AI models across multiple high-resolution streams in real time
- Ease of use: the ability to integrate with existing infrastructure without a specialist engineering team and a six-month timeline
- Flexibility: the capacity to extend the system as requirements evolve, add new capabilities, and integrate with the partner ecosystem you have already built

Most platforms deliver two. The missing one surfaces during the proof of concept, and the cost of that gap is measured in the months of engineering work it takes to get from a working demo to a production deployment.

For example, GStreamer requires specialist expertise that most teams have to hire or train for, adding cost and delay before a project even begins. PyTorch is accessible but underperforms on real edge hardware. Most edge AI vision SDKs work well within their predefined boundaries, and extending them beyond those boundaries is where timelines and budgets tend to slip.

The Metis® AI Processing Unit was designed from the ground up for inference at the edge: high parallelism, low power draw, purpose-built for the multi-model, multi-stream workloads that real security environments generate. The Voyager® SDK was built alongside it, not bolted on afterward, which is why the two function as a system rather than as components that happen to be compatible.

Critically: this architecture works with existing camera infrastructure. New compute does not mean new cameras.

From detection to decision: what it looks like when it works

At ISC West 2026, Axelera® AI is demonstrating exactly what that looks like in practice. Here is what is on show:

- Three camera streams (two 8K and one 4K) feeding simultaneous live video into a single edge system, running on an ORIGIN L-Class V2 workstation PC with an Intel Xeon W7-3565X 32-core processor
- Multiple AI models running in parallel across three PCIe cards, each housing four Metis AIPUs
- Person-of-interest tracking that goes well beyond simple detection:
  - As a subject moves through the crowd, the system continuously builds a profile of who they are, drawing on multiple frames and camera angles over time to improve certainty as better quality images become available
  - The system is constantly improving its view of each subject, holding onto only the best angles and sharpest images as they become available.
  - The system only needs one clear moment. If a subject glances toward a camera even briefly, that single high-quality sighting is enough to trigger an immediate, confirmed identification.
  - Person and face detection run in parallel on every frame, maintaining tracking even when a subject's face is obscured or their back is turned
- Novel threat detection using a custom-trained model built on synthetic data, to catch what metal detectors cannot. Synthera generated thousands of synthetic training images of a plastic prop weapon using its Chameleon platform, and Innowise used that dataset to train and benchmark the right Ultralytics object detection model for this solution
- Face detection and recognition powered by Digica, engineered for high-precision accuracy and demographic parity across diverse races, genders, and age groups to ensure reliable identification in any environment
- PPE detection powered by SpanIdea, identifying safety helmets and high-visibility vests in real time to distinguish between attendees and staff on the show floor
- Automated incident reporting pushed to remote response systems the moment an alert triggers
- Four ISV partner models integrated into one pipeline using the Voyager SDK, with no bespoke middleware between them
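
The "hold onto only the best angles" behaviour in the tracking items above can be pictured as a small, bounded gallery per subject. What follows is a minimal sketch of that idea, not the demo's implementation: the `Sighting` and `SubjectProfile` names, the 0-to-1 `quality` score, the gallery size, and the confirmation threshold are all assumptions made for illustration.

```python
import heapq
from dataclasses import dataclass, field


@dataclass(order=True)
class Sighting:
    quality: float                          # hypothetical 0..1 image-quality score
    camera_id: str = field(compare=False)   # excluded from ordering
    frame_index: int = field(compare=False)


class SubjectProfile:
    """Keeps only the best `capacity` sightings of one tracked subject."""

    def __init__(self, capacity: int = 3, confirm_quality: float = 0.9):
        self.capacity = capacity                # assumed gallery size
        self.confirm_quality = confirm_quality  # assumed "clear moment" threshold
        self._best = []                         # min-heap: weakest kept sighting first

    def add(self, sighting: Sighting) -> None:
        if len(self._best) < self.capacity:
            heapq.heappush(self._best, sighting)
        elif sighting.quality > self._best[0].quality:
            # A sharper view arrived: evict the weakest sighting being held.
            heapq.heapreplace(self._best, sighting)

    def best(self) -> list:
        """Kept sightings, sharpest first."""
        return sorted(self._best, reverse=True)

    def has_clear_view(self) -> bool:
        # One sufficiently clear sighting is enough to attempt identification.
        return any(s.quality >= self.confirm_quality for s in self._best)
```

A min-heap keeps the weakest retained sighting at the front, so deciding whether a new frame deserves a place in the gallery is a single comparison, which matters when the check runs on every frame of every stream.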

Raw footage never leaves the building. When a confirmed threat triggers a remote response, only the relevant event data is transmitted.

Catching a threat is only half the problem. Getting the right information to the right person fast enough to do something about it is the other half.

Consider what a large-venue security team actually needs from an AI system during a live event with tens of thousands of people moving through the space. The system needs to maintain awareness across the entire environment simultaneously, surface only the detections that warrant human attention, and give the operator enough context to act immediately rather than investigate.

Here is how that plays out in practice:

- A subject enters the environment. Tracking begins immediately and follows every subject as they move between cameras across the venue.
- As the subject moves through the space, the system continuously builds a profile of who they are, drawing on multiple frames and camera angles over time to improve certainty as better quality images become available.
- An operator is notified when the system reaches reasonable confidence that the profile matches a person of interest (someone on the operator's watchlist). The alert arrives with context: a high-resolution crop of the subject, their position in the live feed, and a clear confidence indicator.
- If a weapon or object of concern is detected, the alert escalates immediately, triggering a visible and audible alarm at the security station and simultaneously pushing an automated incident report to remote response systems for follow-up.

The system commits fast and refines over time. An initial identification is made as soon as the evidence supports it, and the confidence picture continues to improve as additional data becomes available. A single poor-quality frame does not define the result. Neither does a momentary occlusion.
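
The notify-then-escalate flow above can be sketched as a small per-subject state update. This is an illustration under stated assumptions, not the logic running in the demo: the `TrackState` and `Action` names are hypothetical, the 0.8 threshold is a placeholder for "reasonable confidence", and taking the running maximum is just one simple way to keep a poor frame from undoing an earlier result.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    KEEP_TRACKING = "keep_tracking"
    NOTIFY_OPERATOR = "notify_operator"
    ESCALATE = "escalate"


@dataclass
class TrackState:
    match_confidence: float = 0.0   # best watchlist-match score seen so far
    weapon_detected: bool = False


NOTIFY_THRESHOLD = 0.8  # assumed value; the article only says "reasonable confidence"


def update(state: TrackState, frame_confidence: float, weapon: bool) -> Action:
    """Fold one frame's results into the track and decide what to do next."""
    # Refine over time: a weak or occluded frame never lowers the established score.
    state.match_confidence = max(state.match_confidence, frame_confidence)
    state.weapon_detected = state.weapon_detected or weapon

    if state.weapon_detected:
        return Action.ESCALATE          # alarm plus automated incident report
    if state.match_confidence >= NOTIFY_THRESHOLD:
        return Action.NOTIFY_OPERATOR   # alert with crop, position, confidence
    return Action.KEEP_TRACKING
```

Calling `update` once per frame means the first frame that clears the threshold produces a notification, and later low-quality frames leave that decision in place.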

The non-metallic threat problem. One capability worth calling out: the threat landscape for large venues has evolved beyond what traditional metal detectors were designed to catch. Composite materials, plastic-based weapons, and purpose-built items can evade conventional screening entirely. AI-based detection can be trained for these threat categories before they are encountered in the field, using synthetic data to build detection capability ahead of real-world exposure. With synthetic training data, the system can be trained to detect novel weapons from their 3D CAD designs, before a physical version ever exists. That is a meaningfully different level of preparedness.

The platform is only as capable as what can be built on it

Most enterprise security teams already have specialised tools they depend on and ISV partners they trust. The question is never whether to use them. It is whether the platform can bring them all together.

At ISC West 2026, we built a live demonstration with four ISV partners, each integrating their own specialist capabilities into a single working system using the Voyager SDK:

| Partner | Capability |
|---|---|
| Synthera | Synthetic training data generation for novel and non-metallic threat categories |
| Innowise | Trained multiple Ultralytics models using a combination of synthetic and real-world data, then benchmarked to identify the model delivering the best performance-accuracy tradeoff on Metis hardware |
| Digica | Face detection and recognition engineered for demographic parity across diverse populations |
| SpanIdea | PPE detection for real-time classification of individuals by role and compliance status |

None of these integrations required bespoke middleware. Each partner used the same modular pipeline framework. The result is composable: capabilities from different partners work together in a single pipeline, and new integrations add to the foundation without displacing what is already there.
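
To make "composable" concrete, here is a generic sketch of stages that share one interface. It is deliberately not the Voyager SDK API: `Frame`, `Stage`, `run_pipeline`, and the two stand-in stages are hypothetical names chosen only to show the shape of the idea.

```python
from typing import Callable, Dict, List

Frame = Dict[str, object]          # shared per-frame context (hypothetical)
Stage = Callable[[Frame], Frame]   # every capability exposes the same shape


def run_pipeline(stages: List[Stage], frame: Frame) -> Frame:
    """Pass one frame's context through each stage in order."""
    for stage in stages:
        frame = stage(frame)
    return frame


# Stand-in stages; a real stage would run model inference here.
def detect_people(frame: Frame) -> Frame:
    return {**frame, "people": ["track-1"]}


def detect_ppe(frame: Frame) -> Frame:
    # Reads what an earlier stage wrote and adds its own result alongside it.
    return {**frame, "ppe": {t: "unknown" for t in frame.get("people", [])}}


result = run_pipeline([detect_people, detect_ppe], {"camera_id": "cam-1"})
```

Because each stage only adds keys to the shared context, appending a new capability to the list leaves the existing stages untouched, which is the property the paragraph above describes.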

For a buyer making a multi-year infrastructure decision, this matters as much as any hardware specification. Requirements evolve. New threat categories emerge. Regulatory expectations shift. A platform that extends through a growing ecosystem of partners means the initial investment does not become technical debt when any of those things happen.

The bottom line

Here is how this demonstration addresses the issues we started with.

On cost and infrastructure: The demonstration deploys three PCIe cards, each housing four Metis AIPUs. Because each AIPU contains four independently programmable cores, the configuration can run up to 48 AI model instances in parallel (3 × 4 × 4) at a typical power draw of 30 to 58 watts per card. Compared to GPU-based alternatives, the difference in power consumption, cooling requirements, and operational cost at scale is substantial. Edge processing also reduces the bandwidth and cloud compute costs that make legacy approaches increasingly expensive to operate as deployments grow.
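
The capacity figure follows directly from the numbers in the paragraph above. The short calculation below reproduces it and, as a derived estimate that the article does not state, sums the per-card power range across the three cards, assuming the range simply adds up and ignoring the host workstation.

```python
CARDS = 3
AIPUS_PER_CARD = 4
CORES_PER_AIPU = 4
WATTS_PER_CARD = (30, 58)  # typical per-card range quoted in the text

# One model instance per independently programmable core.
model_instances = CARDS * AIPUS_PER_CARD * CORES_PER_AIPU

# Derived estimate for all three cards together (host system excluded).
total_watts = tuple(CARDS * w for w in WATTS_PER_CARD)

print(model_instances)  # 48
print(total_watts)      # (90, 174)
```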

On governance and compliance: Raw high-resolution footage stays local. Only actionable metadata and event notifications move to the cloud or to remote response systems. The architecture is the compliance answer: not a policy statement that sits alongside the technology, but a structural property of how the system processes and moves data.
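
One way to picture "metadata out, footage stays" is an outbound event type that has no field intended for image data. The sketch below is illustrative only: the `IncidentEvent` fields and the `to_wire` helper are assumptions, not the format used in the demonstration.

```python
import json
from dataclasses import asdict, dataclass
from typing import Tuple


@dataclass(frozen=True)
class IncidentEvent:
    event_type: str                          # e.g. "weapon_detected"
    camera_id: str
    timestamp_utc: str                       # ISO 8601 string
    confidence: float
    bounding_box: Tuple[int, int, int, int]  # x, y, width, height in pixels


def to_wire(event: IncidentEvent) -> str:
    """Serialise the event for a remote response system.

    Only the descriptive fields above are sent; frames and crops are not
    part of this type and stay on the local system.
    """
    return json.dumps(asdict(event), sort_keys=True)
```

Keeping the outbound schema this narrow makes the data-movement rule something a reviewer can check in one place, rather than a convention that each integration has to remember.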

On the security team: The system is designed to reduce alert fatigue by surfacing high-confidence, context-rich notifications rather than generating volume. Operators receive the information they need to make a decision, not a queue of detections to work through. The job does not get smaller. It gets manageable.

The physical security challenge is universal, and our blueprint for addressing it is already scaling. From the retail floor to the front lines of defence, we are bringing precision-engineered edge AI performance to the industries that need it most.