Bert Moons | Director – System Architecture at AXELERA AI

Summary

Convolutional Neural Networks (CNNs) have been dominant in Computer Vision applications for over a decade. Today, they are being outperformed and replaced by Vision Transformers (ViTs) with a higher learning capacity. The fastest ViTs are essentially CNN/Transformer hybrids that combine the best of both worlds: (A) CNN-inspired hierarchical and pyramidal feature maps, in which embedding dimensions increase and spatial dimensions decrease throughout the network, are combined with local receptive fields to reduce model complexity, while (B) Transformer-inspired self-attention increases modeling capacity and leads to higher accuracies. Even though ViTs outperform CNNs in specific cases, their dominance has not yet been established. We illustrate and conclude that SotA CNNs are still on par with, or better than, ViTs on ImageNet validation, especially (1) when trained from scratch without distillation, (2) in the lower-accuracy (<80%) regime, and (3) at the lower network complexities optimized for Edge devices.

Convolutional Neural Networks

Convolutional Neural Networks (CNNs) have been the dominant Neural Network architectures in Computer Vision for almost a decade, following the breakthrough performance of AlexNet[1] on the ImageNet[2] image classification challenge. From this baseline architecture, CNNs have evolved either into variations of bottlenecked architectures with residual connections, such as ResNet[3] and RegNet[4], or into more lightweight networks optimized for mobile contexts using grouped convolutions and inverted bottlenecks, such as MobileNet[5] and EfficientNet[6]. Typically, such networks are benchmarked and compared by training them on small images from the ImageNet data set. After this pretraining, they can be used for applications beyond image classification, such as object detection, panoptic vision, semantic segmentation, or other specialized tasks. This is done by using them as a backbone in an end-to-end, application-specific Neural Network and finetuning the resulting network on the appropriate data set and application.

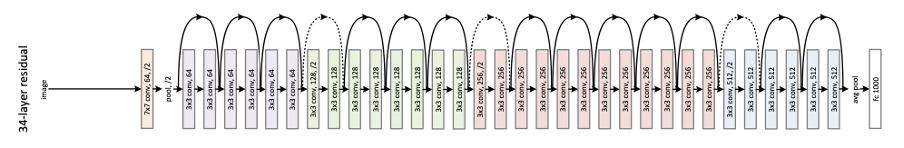

A typical ResNet-style CNN is shown in Figure 1-1 and Figure 1-4 (a). Such networks typically share several features:

- They interleave or stack 1×1 and k×k convolutions to balance the cost of convolutions against building a large receptive field.

- Training is stabilized by using batch normalization and residual connections.

- Feature maps are built hierarchically by gradually reducing the spatial dimensions (W,H), ultimately downscaling them by a factor of 32×.

- Feature maps are built pyramidally by increasing the embedding dimensions of the layers, from tens of channels in the first layers to thousands in the last.

Figure 1-1: Illustration of ResNet34 [3]
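The hierarchical and pyramidal structure listed above can be made concrete with a short sketch. It traces the feature-map shape through a stage table that loosely follows ResNet-34, and compares the cost of a 1×1 and a 3×3 convolution. The names `STAGES`, `feature_map_shapes` and `conv_macs` are illustrative and not taken from any library, and the stage table is a simplification that ignores the individual blocks inside each stage.

```python
# Hypothetical stage table loosely following ResNet-34:
# (name, spatial stride of the stage, output channels).
STAGES = [
    ("stem", 4, 64),     # stride-2 convolution followed by a stride-2 max-pool
    ("stage1", 1, 64),
    ("stage2", 2, 128),
    ("stage3", 2, 256),
    ("stage4", 2, 512),
]


def feature_map_shapes(height, width, stages=STAGES):
    """Return (name, channels, height, width) after each stage."""
    shapes = []
    for name, stride, channels in stages:
        # Hierarchical: the spatial dimensions shrink by the stage stride.
        height, width = height // stride, width // stride
        # Pyramidal: the embedding dimension (channels) grows with depth.
        shapes.append((name, channels, height, width))
    return shapes


def conv_macs(c_in, c_out, k, height, width):
    """Multiply-accumulates of a dense k x k convolution on a height x width output."""
    return c_in * c_out * k * k * height * width


shapes = feature_map_shapes(224, 224)
for name, channels, h, w in shapes:
    print(f"{name}: {channels} channels, {h}x{w}")

# At equal channel counts, a k x k convolution costs k*k times a 1x1 convolution.
ratio = conv_macs(256, 256, 3, 14, 14) / conv_macs(256, 256, 1, 14, 14)
print(f"3x3 vs 1x1 cost ratio: {ratio}")
```

For a 224×224 input, the last stage in this sketch yields a 7×7 map, which matches the overall 32× downscaling. The cost ratio shows why cheap 1×1 convolutions are interleaved with the more expensive k×k ones.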

Within these broader families of backbone networks, researchers have developed a set of techniques known as Neural Architecture Search (NAS)[7] to optimize the exact parametrizations of these networks. Hardware-Aware NAS methods automatically optimize a network’s latency while maximizing accuracy, by efficiently searching over its architectural parameters such as the number of layers, the number of channels within each layer, kernel sizes, and activation functions. So far, due to high training costs, these methods have failed to invent radically new architectures for Computer Vision. They mostly generate networks within the ResNet/MobileNet hybrid families, leading to only modest improvements of 10-20% over their hand-designed baselines[8].
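As a rough illustration of the idea behind Hardware-Aware NAS, the sketch below picks the configuration with the highest accuracy score that still fits a latency budget. Everything in it is an assumption made for illustration: the search space, the `proxy_latency` and `proxy_accuracy` functions, and the budget are invented placeholders. Real NAS methods estimate accuracy by training or by learned predictors, and they use far more efficient search strategies than the exhaustive grid search used here.

```python
import itertools
import math

# Hypothetical search space over a few architectural parameters.
SEARCH_SPACE = {
    "num_layers": [8, 12, 16],
    "channels": [32, 64, 128],
    "kernel_size": [3, 5],
}


def proxy_latency(cfg):
    """Toy latency proxy: scales like the MACs of a stack of k x k convolutions."""
    return cfg["num_layers"] * cfg["channels"] ** 2 * cfg["kernel_size"] ** 2


def proxy_accuracy(cfg):
    """Toy accuracy proxy (invented): larger networks score higher, with diminishing returns."""
    return (
        math.log(cfg["num_layers"])
        + math.log(cfg["channels"])
        + 0.1 * cfg["kernel_size"]
    )


def search(latency_budget, space=SEARCH_SPACE):
    """Exhaustively scan the space; return the best config within the budget, or None."""
    keys = list(space)
    best = None
    for values in itertools.product(*(space[k] for k in keys)):
        cfg = dict(zip(keys, values))
        if proxy_latency(cfg) > latency_budget:
            continue  # violates the hardware constraint
        if best is None or proxy_accuracy(cfg) > proxy_accuracy(best):
            best = cfg
    return best


budget = 600_000  # arbitrary units, chosen only for this example
best = search(budget)
print(best, proxy_latency(best))
```

Exhaustive search is only feasible here because the toy space has 18 candidates. The constrained-optimization structure, maximizing accuracy subject to a latency limit, is the part that carries over to practical methods.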