The importance of software for unlocking the value of artificial intelligence cannot be overstated: the most powerful hardware in the world is just a paperweight without a usable software stack. At Axelera AI we’ve built the Voyager Software Development Kit (SDK) to give developers and ML engineers a simple solution for developing and deploying AI. Today, we’re excited to share that the Voyager SDK is now publicly available on our GitHub page.

Introduction

The Voyager SDK is an end-to-end integrated software stack for Axelera AI’s inference platform, designed for performance, efficiency and ease of use. It enables developers to deploy pre-trained machine learning (ML) models and to construct end-to-end optimized application pipelines quickly and easily. Whether you have trained your own model weights, are using an open source model with pre-trained weights, or want to build on one of the models offered in our model zoo, Voyager provides an effortless path to deploy and evaluate a model on Axelera AI’s hardware platforms, build an inference pipeline around it, and integrate that pipeline into your application logic.

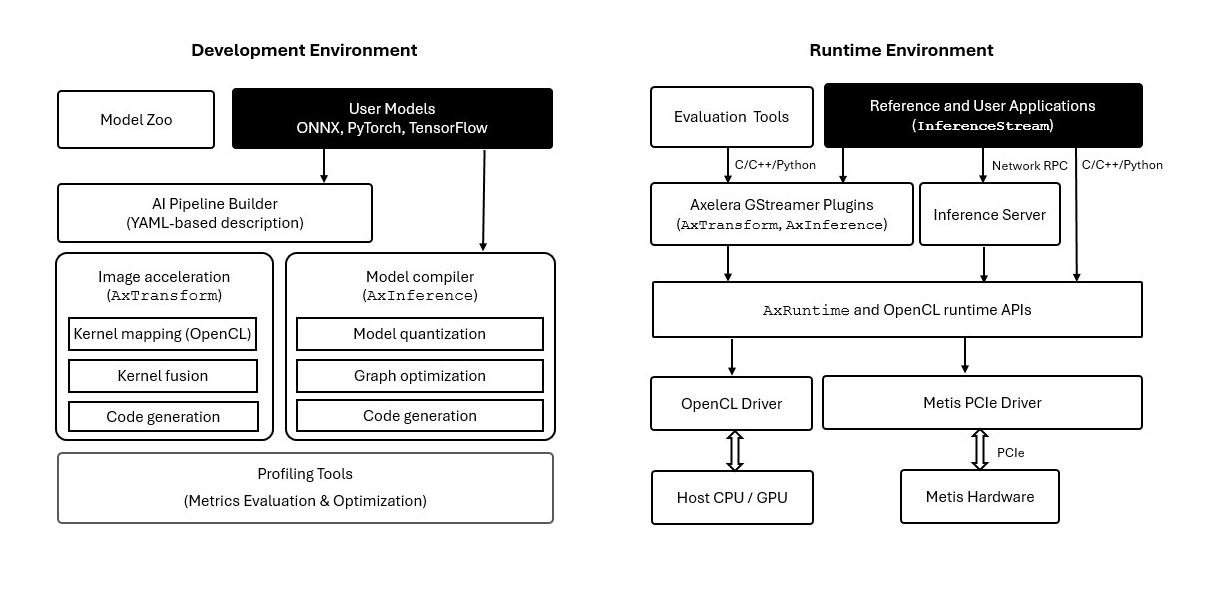

The Voyager SDK offers a development environment in which the developer deploys a model, measures its accuracy and performance, and integrates it into an application pipeline. It also offers a runtime environment (i.e., a runtime stack) that offloads the execution of the pipeline when the application runs on an edge system. The two environments are logically separate but can co-exist on the same system.

What is included in the Voyager SDK?

We distribute the latest version of the Voyager SDK as a GitHub repository which, among other content, offers the following:

- An automated installer for the core binary packages of the SDK, including native packages and Python wheels. For certain packages, such as the Linux kernel driver, native source code is available as well. The installer can be used to install the developer environment, the runtime environment or both.

- Source code for the AI pipeline builder, image acceleration libraries, GStreamer plug-ins, inference server and model evaluation infrastructure.

- Comprehensive documentation to support developers using our platform. Our documentation covers general topics such as installation, getting started and performance benchmarking, as well as tutorials on more specific topics such as deployment of custom model weights. Additional documents specific to various host platforms and upgrade instructions will be available in our customer portal.

- A model zoo of optimized models, including dozens of models for tasks such as image classification, object detection, semantic segmentation, instance segmentation and keypoint detection. As we optimize the performance and accuracy of new models, we will keep expanding the model zoo with additional models and use cases.

- Multiple sample pipelines and applications that exemplify the use of our stack and can help streamline development and speed up time-to-market.

Performance

Recently we published a blog post on the performance and accuracy achieved with benchmark computer vision models on Metis AI processing units. Those tests were run using the software we are releasing today, so you can reproduce those benchmarks for yourself and, more importantly, take advantage of the performance of Metis in your applications. For the latest benchmarks and performance numbers, please visit here.

Why Now?

Axelera AI was founded on the principle that everyone should have access to leading-edge inference capabilities. We believe openness is the best way to empower developers, and we are thrilled to have reached this milestone. For over six months our customers have been using Voyager, giving us feedback and helping shape our roadmap. We now look forward to broadening both access and feedback through our online community.

Interested in Getting Involved?

Our team is committed to fostering a collaborative environment by encouraging open source contributions. Developers can submit new pipelines or improvements via pull requests, which will be reviewed and potentially integrated into the repository. With this approach we aspire to enhance the quality and reach of our SDK and build a vibrant community of contributors.

Make sure you’re signed up here at the Axelera AI community to discuss your projects, ask questions, and support your fellow developers.

Still don’t have a Metis inference accelerator? Get one today.